Getting your B2B SaaS brand cited by ChatGPT requires understanding the specific retrieval pipeline ChatGPT uses: Bing's search index, structured content extraction, and a freshness weighting that penalizes stale content. This playbook breaks the pipeline into four stages, walks through the exact technical moves for each stage, and shows the measurement stack that tells you whether the work is moving the needle. It is written for SaaS teams who have the technical capability to ship changes but need a concrete sequence, not another abstract GEO overview.

Introduction

A Head of Growth at a Series B SaaS company tried ten different category queries in ChatGPT last week. Her product was not mentioned in any of them. Not once. Her three closest competitors were cited in six out of ten, sometimes with full descriptions of features her product actually does better. She has spent the past eighteen months building a content operation, a category POV, and a solid analyst relations motion. Her SEO traffic is up 60% year-over-year. And ChatGPT treats her brand as if it does not exist.

This is happening to a specific kind of SaaS brand at scale in 2026: strong on traditional SEO, healthy content operation, decent analyst coverage, and yet invisible in ChatGPT's answers. The root cause is usually not a content problem. It is a pipeline problem. Content exists. Content is indexed by Google. Content is not being picked up by Bing's index the way it needs to be, is not being extracted in a format ChatGPT retrieves, or is losing its freshness weighting faster than it should.

This playbook walks through the four stages of ChatGPT's citation pipeline and what you do at each stage to move from invisible to cited. It is specifically for B2B SaaS. It assumes you have shipped code before. It names tools, specifies settings, and stays concrete throughout.

Quick Summary

How Does ChatGPT Actually Find Your Brand?

ChatGPT's retrieval for brand-related answers runs through four sequential stages. Every B2B SaaS brand that gets cited has passed all four. Every brand that is invisible has failed at one of them.

Stage 1: Bing Index Entry. ChatGPT uses Bing's search index, not Google's, when it performs live retrieval for category queries. If your content is not in Bing's index, or is indexed weakly, the pipeline stops here. This is the single most common failure mode for B2B SaaS brands that rely on Google-first SEO without deliberate Bing work.

Stage 2: Structured Extraction. Once Bing has your content, the question becomes whether ChatGPT can extract a clean, self-contained answer from it. ChatGPT does not render JavaScript, does not parse JSON-LD structured data during live fetch, and prioritizes visible HTML with clear semantic structure. Content hidden behind JavaScript tabs, sliders, or dynamic components often fails this stage silently.

Stage 3: Retrieval Match. When a user asks ChatGPT a category question, ChatGPT's retrieval algorithm matches the query to its indexed corpus. Match quality depends on entity clarity (does the model understand what your brand is and what category it belongs to), source diversity (is your brand corroborated across multiple sources or only visible on one domain), and query-format alignment (does your content structure match how the user phrased the question). Brands that exist on only their own domain and nowhere else fail this stage often.

Stage 4: Citation Rendering. The final stage is whether ChatGPT chooses to cite you explicitly or synthesizes your content without attribution. FAQ-formatted content, numbered lists, and clean definitional sections get cited with attribution more often than narrative content. This is partially cosmetic, but attribution is what makes brand visibility compound downstream.
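A quick way to sanity-check Stage 2 is to look at your page the way a non-rendering fetcher would: raw HTML only, with script and style contents ignored. The sketch below is a rough approximation under that assumption, not a description of ChatGPT's actual extractor. The function names and sample markup are hypothetical.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text that sits outside <script>, <style>, and <template> tags."""

    SKIPPED = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is not inside a skipped tag.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html):
    """Return the text a fetcher would see without executing JavaScript."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


def phrase_is_extractable(raw_html, phrase):
    """True if the phrase appears in the un-rendered, visible HTML text."""
    return phrase.lower() in visible_text(raw_html).lower()
```

Run it against the raw response body of your pricing and product pages (fetched without a browser). If your core value proposition only shows up after hydration, this check will return False for it.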

Why Is Bing the Gateway to ChatGPT Citations?

This is the part most B2B SaaS teams underestimate. The mechanics are specific.

ChatGPT's live web search, when enabled by the user or triggered automatically by query type, runs through Bing's Web Search API. The same underlying pipeline powers Microsoft Copilot and several other AI products. This is not a casual integration. It is a deep index relationship, rooted in OpenAI's multi-year partnership with Microsoft.

For B2B SaaS brands, this has direct implications. If your Bing indexing is thin (common, since most SEO teams optimize for Google and let Bing "follow along"), your ChatGPT visibility is capped regardless of how good your content is. Bing Webmaster Tools sign-up, sitemap submission, and URL Inspection are effectively ChatGPT visibility tools now, not just Bing SEO tools.

The gap shows up in two places. First, indexation coverage: it is common to see a SaaS site with roughly 80% of its pages indexed in Google but only about 40% indexed in Bing. Second, retrieval frequency: Bing is more aggressive than Google at deprioritizing pages that have not been recrawled recently, which tightens the freshness constraint.
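To put a number on the indexation gap for your own site, compare your sitemap against a list of URLs Bing reports as indexed. The sketch below assumes you already have that list (for example, copied or exported from Bing Webmaster Tools). The function names are hypothetical, and the code only handles a plain urlset sitemap, not a sitemap index.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard sitemap.xml files.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Extract every <loc> URL from a urlset sitemap document."""
    root = ET.fromstring(sitemap_xml)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def index_coverage(sitemap_xml, indexed_urls):
    """Return (coverage ratio, list of sitemap URLs missing from the index)."""
    urls = sitemap_urls(sitemap_xml)
    indexed = set(indexed_urls)
    missing = [url for url in urls if url not in indexed]
    coverage = (len(urls) - len(missing)) / len(urls) if urls else 0.0
    return coverage, missing
```

The list of missing URLs is usually more useful than the ratio itself, because it tells you which pages to submit first.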

On February 10, 2026, Microsoft launched AI Performance reporting in Bing Webmaster Tools in public preview. This is the first direct view publishers have into how Microsoft Copilot and ChatGPT retrieval draw from their content. Setting it up is free. Ignoring it is leaving the measurement layer on the table.

What Should You Configure in Your robots.txt First?

The robots.txt audit is the first technical deliverable. Most B2B SaaS sites are either too restrictive (blocking crawlers that would help them) or permissive by accident (nobody has thought about the configuration at all). A deliberately permissive, documented configuration avoids both problems.

The AI crawlers worth explicitly allowing for B2B SaaS in 2026 are: GPTBot (OpenAI training), OAI-SearchBot (ChatGPT search-time retrieval), ChatGPT-User (on-demand fetch when a user asks a question), PerplexityBot, Perplexity-User, ClaudeBot (Anthropic training), Claude-SearchBot (Claude retrieval), Google-Extended (Gemini), Googlebot (standard), Bingbot (the direct gateway to ChatGPT), Applebot and Applebot-Extended, CCBot (Common Crawl, which seeds many LLMs), and Amazonbot.

A reasonable robots.txt configuration for a B2B SaaS site does not need to be long. Something like:

User-agent: *
Allow: /

User-agent: GPTBot
Allow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: Google-Extended
Allow: /

Sitemap: https://yourdomain.com/sitemap.xml

The explicit Allow directives look redundant next to the wildcard, but they document intent. When a new AI engine launches, or when Microsoft, Google, or a third party comments on your robots.txt choices, explicit directives make your position easy to audit.
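Before shipping a robots.txt change, it is worth checking mechanically that none of the crawlers you care about is blocked. Here is a minimal sketch using Python's standard-library robots.txt parser. The crawler list is a subset of the names above, and the helper name is hypothetical. Real crawlers may interpret edge cases differently from this parser, so treat the result as a smoke test.

```python
from urllib.robotparser import RobotFileParser

# A subset of the AI crawler tokens discussed in this section.
AI_CRAWLERS = [
    "GPTBot",
    "OAI-SearchBot",
    "ChatGPT-User",
    "PerplexityBot",
    "ClaudeBot",
    "Google-Extended",
    "Bingbot",
]


def blocked_crawlers(robots_txt, url, agents=AI_CRAWLERS):
    """Return the user agents that this robots.txt text blocks for the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]
```

An empty list means every listed crawler may fetch the URL. Note that this parser treats a blank line as the end of a group, so keep each User-agent line directly above its rules when you test a file.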

How Do You Submit Your Content via IndexNow?

This is the single highest-leverage move in the playbook and the one most B2B SaaS teams have not done.

IndexNow is an open protocol that lets your site notify Bing (and other participating engines) of new or updated content within seconds, instead of waiting for the crawler to discover the change organically. Because ChatGPT retrieves through Bing's index, notifying Bing faster means appearing in ChatGPT answers faster.

The data on IndexNow speed differential is clear. With IndexNow active, Bing re-crawls updated pages within 3 to 7 days and citation changes appear in ChatGPT retrieval within 5 to 10 days. Without IndexNow, the same recrawl-to-citation cycle takes 1 to 4 weeks depending on site authority and update frequency. That is a 3x to 4x acceleration for a couple of hours of engineering work.

Implementation for the common B2B SaaS stacks:

Next.js or custom React stack. Generate a random 32-character hex key. Place a text file at /{key}.txt at your domain root, containing only the key. On every content publish or update, send a POST request to https://api.indexnow.org/indexnow with your key and the URL list. A webhook from your CMS or a build-time script works.

WordPress. Install the IndexNow plugin. It handles key generation, file placement, and automatic submission on post publish or update. Ten minutes, zero custom code.

Webflow or similar hosted CMS. Use a Zapier or Make integration to post to the IndexNow API on CMS webhook. Slightly more setup, but still under an hour.

Shopify. Built-in IndexNow support via the Admin API. Enable it in Preferences and the pings happen automatically.

Verify your setup through Bing Webmaster Tools URL Submission. If your first few IndexNow pings are accepted, the rest will be.
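For the custom-stack route, the moving parts are small enough to sketch. The code below generates a 32-character hex key, builds the JSON body for the IndexNow endpoint named above, and sends the POST. The helper names are hypothetical, and the key file location assumes the /{key}.txt convention described earlier. Check the payload field names against the current IndexNow documentation before relying on them.

```python
import json
import secrets
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def generate_key():
    """Return a random 32-character hex key (16 random bytes)."""
    return secrets.token_hex(16)


def build_payload(host, key, urls):
    """Build the JSON body: host, key, where the key file lives, and URLs."""
    return {
        "host": host,
        "key": key,
        # Assumes the key file is hosted at the domain root as /{key}.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }


def submit(payload, endpoint=INDEXNOW_ENDPOINT):
    """POST the payload and return the HTTP status code.

    urllib raises HTTPError for 4xx and 5xx responses, so a returned
    value means the request was not rejected outright.
    """
    request = urllib.request.Request(
        endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

In practice you would generate the key once, store it as configuration, and call submit(build_payload(...)) from the CMS webhook or build script with the URLs that changed in that publish.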

How Does Bing Webmaster Tools AI Performance Let You Measure Citations?

This is the measurement layer Microsoft introduced in February 2026, and it is the closest thing to a direct ChatGPT analytics product available in 2026.

What it tracks for each domain you verify: total citations across Microsoft Copilot and partner AI products, average cited pages per day, grounding queries (the queries where your content was used to ground an AI answer even if you were not cited by name), page-level citation activity (which specific URLs are being cited), and citation trends over 30-day windows.

What it does not track: ChatGPT-specific citations are not exposed as a separate feed, because Microsoft groups them under the broader AI Performance umbrella. But because the underlying index is the same, the trends you see in Bing AI Performance are directional for ChatGPT citation behavior.

How to use it weekly. Open the Bing Webmaster Tools dashboard, navigate to AI Performance, and review three numbers: total citations (are they trending up week-over-week), page-level citation activity (which pages are pulling weight and which are not), and citation trend (is the 30-day rolling average growing). This is your ChatGPT-and-Copilot visibility signal.
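If you log the daily citation totals from the dashboard into a spreadsheet or list, the two trend numbers in the weekly review are easy to compute yourself. This is a minimal sketch that assumes a plain list of daily counts, oldest first. The dashboard's export format is not assumed here, and the function names are hypothetical.

```python
def rolling_average(daily_counts, window=30):
    """Average of the most recent `window` daily citation counts."""
    recent = daily_counts[-window:]
    return sum(recent) / len(recent) if recent else 0.0


def week_over_week_change(daily_counts):
    """Fractional change between the last 7 days and the 7 days before.

    Returns None when there are fewer than 14 days of data or when the
    previous week had zero citations (the ratio is undefined).
    """
    if len(daily_counts) < 14:
        return None
    this_week = sum(daily_counts[-7:])
    last_week = sum(daily_counts[-14:-7])
    if last_week == 0:
        return None
    return (this_week - last_week) / last_week
```

A positive week-over-week value alongside a rising 30-day average is the pattern you want. A spike in one without the other usually means a single page moved, which is where the page-level view comes in.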

Which Content Formats Get Cited Most Often by ChatGPT?

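Not all content performs equally in ChatGPT retrieval. Some formats are structurally more extractable than others: pillars, listicles, FAQs, comparisons, and clean definitional sections extract more cleanly than narrative content.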

The implication for B2B SaaS content operations: high-value commercial pages (pricing, product, category comparison) should be optimized for extractability, not just conversion. This sometimes means adding a clear text description above the form-gated demo, or rewriting product pages to lead with extractable definitions rather than marketing hero copy.

How Often Should You Refresh Cited Content?

Freshness is the silent killer of ChatGPT citation portfolios. Content that was cited in month one often drops out of citation rotation in month two if nothing has been updated.

The internal data we track shows a ChatGPT citation half-life of roughly 45 days for B2B SaaS category content. That means the average cited page loses half of its citation volume 45 days after last meaningful update. Some content holds longer (general definitional content on slow-moving topics can hold 60 to 90 days). Some decays faster (stats-heavy content on fast-moving topics can decay in 30 days).
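To reason about what a 45-day half-life implies between refreshes, you can model it as simple exponential decay. That model is an assumption for illustration: the figure above only says volume halves at around 45 days, not that the curve is exactly exponential. The function name is hypothetical.

```python
def remaining_fraction(days_since_update, half_life_days=45.0):
    """Fraction of citation volume left under exponential decay.

    Assumes volume halves every `half_life_days` since the last
    meaningful update.
    """
    return 0.5 ** (days_since_update / half_life_days)
```

Under this model a page keeps roughly 63% of its citation volume at day 30 and 25% at day 90, which is why the refresh rhythm starts well before the half-life is reached.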

The refresh playbook:

Day 30 after publish. Update any stats that are older than 90 days. Check that dateModified is exposed both on the published page and in the Article schema. Push the URL through IndexNow. This is the minimum intervention to keep the page inside the citation window.

Day 60 after publish. Substantive update. Add any new developments in the topic since publication. Expand one section with additional research or a new example. Refresh the FAQ at the bottom with one new question based on what you have learned from customer conversations. Push through IndexNow.

Day 90 after publish. Full refresh review. Does the content still reflect your current POV on the topic? Is the intro definition-first sentence still sharp? Have competitors published newer content that displaces your argument? A full refresh at day 90 is the difference between a pillar that compounds for a year and a pillar that stops producing by month four.

Skip this rhythm and you are rebuilding from zero every quarter. Keep it and you compound.
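The rhythm is easier to keep when the dates are generated at publish time and dropped into whatever task tracker you use. Here is a minimal sketch of that calculation. The step labels and function name are hypothetical shorthand for the three checkpoints above.

```python
from datetime import date, timedelta

# Day offsets and short labels for the three refresh checkpoints.
REFRESH_STEPS = [
    (30, "stats update"),
    (60, "substantive update"),
    (90, "full refresh review"),
]


def refresh_schedule(published):
    """Return (due date, label) pairs for a page published on `published`."""
    return [
        (published + timedelta(days=offset), label)
        for offset, label in REFRESH_STEPS
    ]
```

For example, refresh_schedule(date(2026, 1, 1)) yields due dates of January 31, March 2, and April 1, 2026.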

What Are the Most Common Technical Failures in B2B SaaS GEO Today?

Across the B2B SaaS audits we have run in the last year, the same seven failures show up repeatedly. Each one kills ChatGPT citation potential independently. A brand can be doing everything else right and still be invisible if any single one of these is broken.

Failure 1: Thin Bing indexation. The brand's SEO team has focused exclusively on Google and never set up Bing Webmaster Tools. Only 30 to 50% of pages are indexed in Bing. ChatGPT cannot cite what Bing has not indexed. Fix: Bing Webmaster setup, sitemap submission, URL Inspection on the 20 highest-priority pages.

Failure 2: No IndexNow integration. New or updated content takes 1 to 4 weeks to appear in Bing's index, and therefore in ChatGPT retrieval. Fix: implement IndexNow at the CMS layer.

Failure 3: JavaScript-rendered core content. The hero value proposition, the product description, or the pricing table is rendered via React hydration. ChatGPT does not render JavaScript. The brand's most important pages look empty to the retrieval pipeline. Fix: server-side render (SSR) or static-generate the critical above-the-fold content.

Failure 4: Gated content behind login or form. The most detailed product specs, case studies, and comparison guides are gated. ChatGPT cannot retrieve gated content. Fix: create unlinked public versions of the most citation-valuable pieces, or un-gate the content that is meant to be discoverable.

Failure 5: Stale content stuck at old dateModified. The brand's best pillar pages were published 18 months ago, have not been updated, and the dateModified field shows it. The content has dropped out of ChatGPT citation rotation because of freshness decay. Fix: 30 to 45 day refresh rhythm on the top 10 pillars.

Failure 6: Thin Person schema on authors. The Article schema exists but the Person schema under "author" has only a name. No sameAs array linking to LinkedIn, Crunchbase, Wikidata, or the personal site. The Growth Marshal data shows this pattern ranks lower than no Person schema at all. Fix: complete Person schema for every author with full sameAs arrays.

Failure 7: Single-source brand mentions. The brand's only mentions are on its own domain. No Reddit presence, no trade publication coverage, no LinkedIn Pulse cadence from the CEO or CMO. ChatGPT's retrieval algorithm weights multi-source presence heavily, and single-source brands rank poorly in category answers. Fix: structured earned-media plan targeting three types of sources simultaneously.
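Failures 5 and 6 both come down to what the Article schema actually contains. As a rough sketch of a complete version, the code below builds Article JSON-LD with dateModified and a Person author carrying a sameAs array, then wraps it in a script tag. The property names are standard schema.org vocabulary. The function names and any values you pass in are placeholders.

```python
import json


def article_jsonld(headline, date_published, date_modified,
                   author_name, author_profiles):
    """Build Article JSON-LD with a Person author and sameAs links."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "dateModified": date_modified,
        "author": {
            "@type": "Person",
            "name": author_name,
            # Profile URLs (LinkedIn, Crunchbase, Wikidata, personal site).
            "sameAs": list(author_profiles),
        },
    }


def as_script_tag(data):
    """Serialize JSON-LD into a script tag for the page head."""
    body = json.dumps(data, indent=2)
    # Escape "</" so a string value cannot close the script tag early.
    body = body.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"
```

Generating the block from the same CMS fields that drive the visible byline and "last updated" date keeps the schema and the page from drifting apart.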

How Does the Pipeline Behave for Specific SaaS Categories?

Not every B2B SaaS category behaves the same way in ChatGPT's retrieval. Here is how the pipeline shifts across the three biggest SaaS verticals.

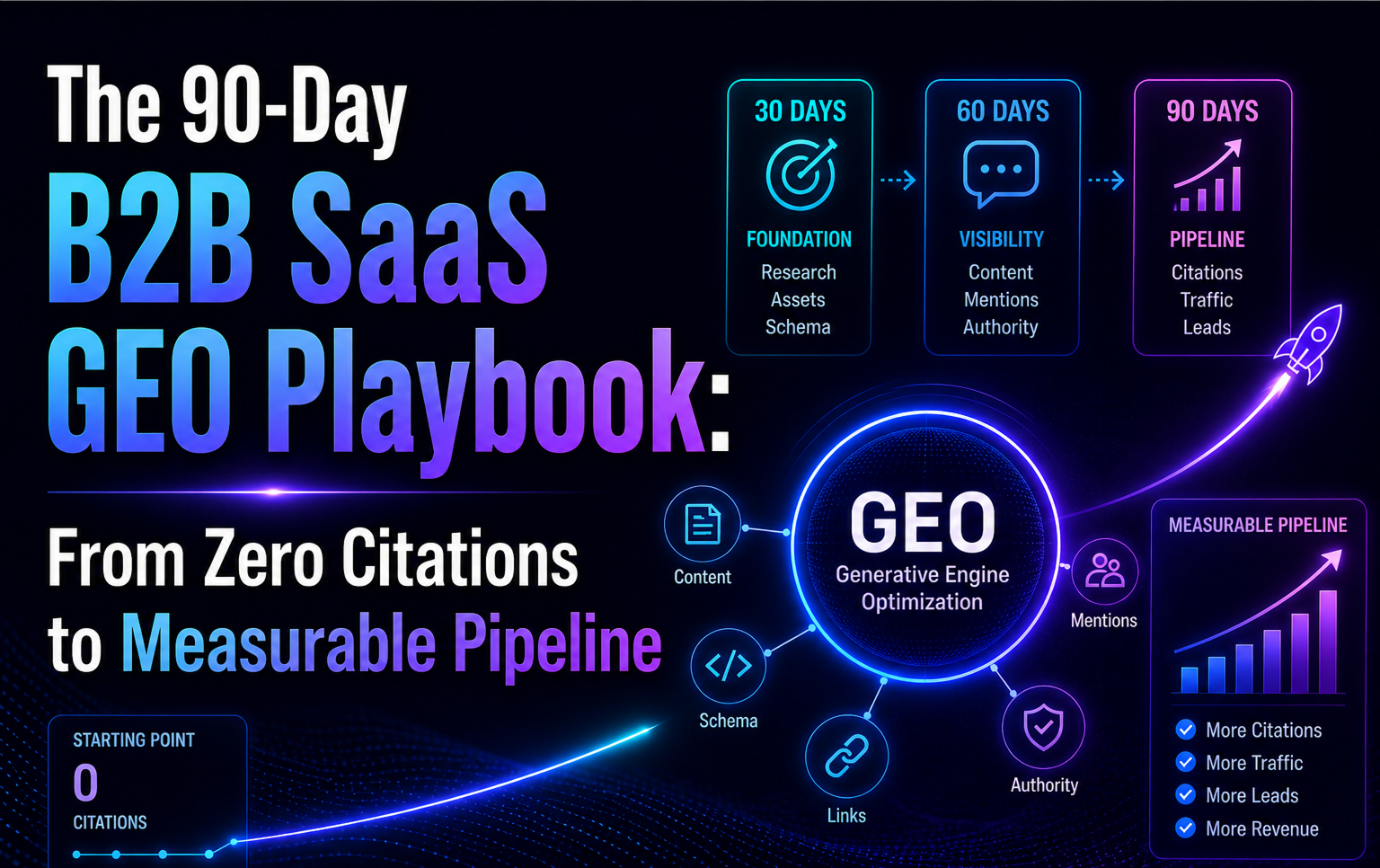

Horizontal B2B SaaS (CRM, marketing automation, project management). Highly competitive categories where ChatGPT has dozens of vendors in its training data. The citation game is about being in the top 5 to 7 that get cited in typical buyer queries. Winning requires strong third-party corroboration (Clutch, G2, named trade publication coverage) because ChatGPT's retrieval defaults to the safest, most-corroborated vendors. Content alone rarely breaks in. The 90-Day B2B SaaS GEO Playbook has the sequencing for this.

Vertical SaaS (healthcare compliance, legal tech, construction management). Less crowded categories where ChatGPT has a smaller set of trained vendors. The citation game is about dominating a specific niche term. Winning is faster here than in horizontal SaaS because fewer competitors are working the angle, but requires vertical-specific earned media (medical trade publications, legal tech conferences, etc.). For brands with compliance-heavy content, ChatGPT rewards neutrality and factual density.

Developer tools and APIs. A specific subcategory where ChatGPT's behavior is distinctive. Developer tool queries often trigger ChatGPT to retrieve documentation pages, GitHub repos, Stack Overflow answers, and Reddit threads directly. The winning strategy leans harder on technical documentation quality, public changelog visibility, and Stack Overflow or r/programming presence than on traditional marketing content. A brand with weak blog content but strong GitHub and Stack Overflow presence can outrank a brand with strong blog content but no developer community footprint.

A Short Case Study: HermQ Moved From Invisible to Citable in 90 Days

To make this concrete, here is how one of our clients, HermQ (a health and wellness SaaS), moved through the pipeline over 90 days.

Starting state (day 0). Zero presence in ChatGPT category answers. Bing indexation at approximately 40%. No IndexNow. Thin schema. No LinkedIn Pulse activity from the founder. Reddit presence limited to one customer-facing subreddit.

Day 30. Bing Webmaster Tools set up, 85% indexation achieved. IndexNow integrated via CMS webhook. FAQPage schema deployed on five strategic pages. Article plus Person schema on every blog post with full sameAs arrays for the founder. First Peec AI baseline measurement captured.

Day 60. Four pillar articles published, each in the two-layer architecture format. Founder published two LinkedIn Pulse articles cross-referencing the pillars. Two thoughtful, non-promotional Reddit posts in relevant subreddits. IndexNow submissions processed by Bing within 3 to 5 days each.

Day 90. Measurable citation presence in Google AI Overviews for two category queries. First citations appearing in the Bing Webmaster Tools AI Performance dashboard, a directional signal for ChatGPT. Monthly clicks up 55.7% (700 to 1,090). Average position improved from 19.9 to 13.1. Branded query clicks up 73.7%.

Day 120 (projection based on current trajectory). Expected first consistent ChatGPT citations on category queries. Expected Peec AI visibility lift from 0% to mid-single-digits across the tracked prompt panel.

The HermQ case is not extraordinary. It is the expected outcome when the pipeline work is done in the right order, on the right cadence, with measurement discipline. The case studies that exist across our client base are variations on the same underlying sequence.

How Do ChatGPT, Perplexity, Gemini, and Google AIO Differ in Retrieval?

If your priority is ChatGPT specifically, the strategic choices are clear. Invest in Bing Webmaster setup. Implement IndexNow. Optimize for long-form pillars with Reddit and LinkedIn amplification. Refresh on a 30 to 45 day rhythm. Skip the JavaScript-heavy interactive demos that Gemini would handle but ChatGPT cannot.

If ChatGPT is one of multiple priority engines (most common scenario), the playbook needs a second layer. The 90-Day B2B SaaS GEO Playbook covers the multi-engine sequence.

Key Takeaways

- ChatGPT retrieves through Bing's index, not Google's. Bing Webmaster work is ChatGPT visibility work.

- IndexNow cuts your content-to-citation time by 3x to 4x. Most B2B SaaS sites still do not use it.

- The ChatGPT citation half-life is roughly 45 days. Build a 30 to 45 day refresh rhythm or rebuild from zero every quarter.

- Bing Webmaster Tools AI Performance (launched Feb 2026) is the closest thing to a direct ChatGPT analytics product available. Free to use.

- Content formats that extract cleanly (pillars, listicles, FAQs, comparisons) outperform narrative content in ChatGPT retrieval.

Frequently Asked Questions (FAQs)

Does Google's index matter for ChatGPT citations at all?

Indirectly, yes. Google indexation is the foundation for most non-ChatGPT AI engines (Gemini, Google AI Overviews, and any search product that uses Google's API). Strong Google presence also tends to correlate with strong Bing presence because both engines crawl similar signals. But if you had to pick one index to optimize for ChatGPT specifically, it would be Bing. ChatGPT does not query Google directly.

How fast can a brand with no ChatGPT visibility today start seeing citations?

In our client data, 3 to 5 weeks from the start of the foundational technical work (Bing Webmaster, IndexNow, schema, llms.txt). Earlier for brands with existing third-party mentions in Reddit or trade publications. Later for brands starting entirely from zero with no earned media presence.

Is FAQ schema still the highest-impact schema type for ChatGPT?

For ChatGPT specifically, schema impact is indirect. ChatGPT does not parse JSON-LD during live retrieval. FAQ schema helps via Google Knowledge Graph, which then feeds other retrieval systems. For ChatGPT, the visible Q&A content on the page is what matters. FAQPage schema plus visible Q&A content is the strongest combination.
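One way to keep the visible Q&A and the FAQPage schema in sync is to render both from the same list of question and answer pairs. The sketch below shows that idea. The heading level, function names, and markup shape are assumptions, not a required format.

```python
import html


def faq_html(qas):
    """Render visible Q&A markup: one heading and paragraph per pair."""
    parts = []
    for question, answer in qas:
        parts.append(
            f"<h3>{html.escape(question)}</h3>\n<p>{html.escape(answer)}</p>"
        )
    return "\n".join(parts)


def faq_jsonld(qas):
    """Build FAQPage JSON-LD from the same question and answer pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qas
        ],
    }
```

Because both outputs come from one source, the text a fetcher sees in the HTML always matches what the schema declares.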

What if my B2B SaaS buyers use Perplexity or Gemini more than ChatGPT?

The playbook shifts. Perplexity rewards stat-dense content and aggressive freshness. Gemini rewards long-form content with named author schema and E-E-A-T signals. The core foundation (robots.txt, schema, refresh rhythm) stays the same. The amplification layer (Reddit distribution for ChatGPT, Google-indexed earned media for Gemini) diverges.

Can I measure ChatGPT citations for my own site without paid tools?

Yes, but with limitations. Bing Webmaster Tools AI Performance gives you most of the signal for free. A weekly manual audit (run 10 category queries through ChatGPT, log your brand presence) adds a second data point. What paid tools like Peec AI add is scale (hundreds of daily prompts, cross-engine comparison) and longitudinal consistency. For small teams, the manual audit plus Bing Webmaster is enough.
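For the manual audit, the only calculation you need is the share of answers that mention your brand. This minimal sketch assumes you paste each ChatGPT answer into a list of strings. The matching is a simple whole-word, case-insensitive check, so it will miss misspellings and abbreviations. The function names are hypothetical.

```python
import re


def mentions_brand(answer, brand):
    """True if the brand appears as a whole word, ignoring case."""
    pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
    return re.search(pattern, answer, flags=re.IGNORECASE) is not None


def mention_rate(answers, brand):
    """Share of logged answers that mention the brand at least once."""
    if not answers:
        return 0.0
    hits = sum(1 for answer in answers if mentions_brand(answer, brand))
    return hits / len(answers)
```

Run the same ten queries each week and log the rate next to the date. Consistent prompts matter more than the exact number.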

Does running ChatGPT ads affect my organic citation rate?

ChatGPT introduced advertising in 2025, with sponsored placements that appear alongside organic AI answers. The paid placements do not substitute for organic citations, and they do not directly improve organic citation rate. Most buyers trust organic recommendations more than sponsored placements. Paid ChatGPT ads are a separate channel decision from the citation playbook in this post.