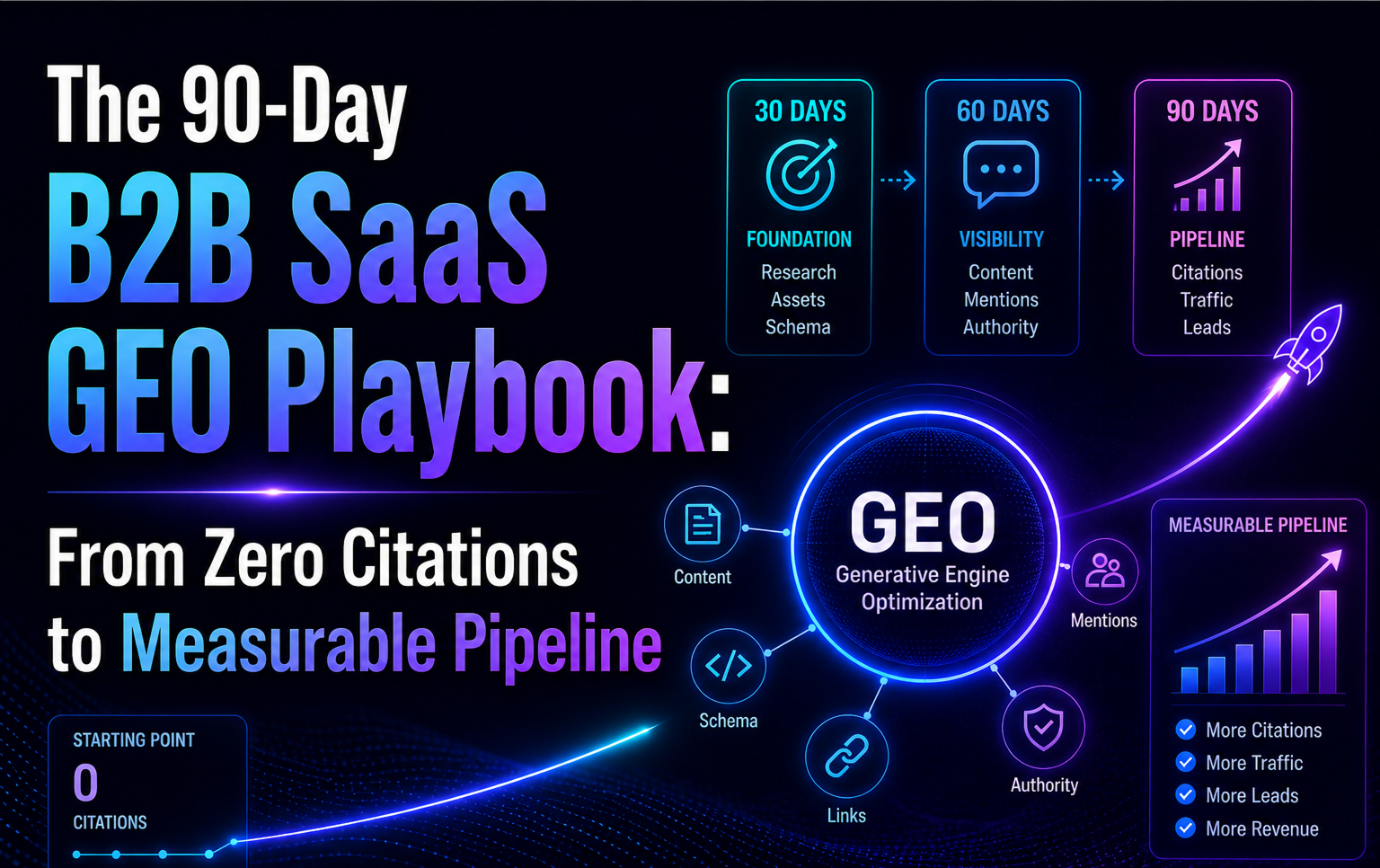

The 90-Day B2B SaaS GEO Playbook is a phased, measurable sequence designed to take a SaaS brand from zero AI citations to measurable pipeline impact in one quarter. It's built around three 30-day phases (Foundation, Amplification, Measurement), each with specific deliverables, owners, and success thresholds. The framework is the one we use with our own clients, and the one we used to rebuild our own AI visibility after pivoting to a pure GEO specialty.

Introduction

A B2B SaaS CMO signed off on a GEO engagement last quarter. Six weeks in, the team was producing content. Nine weeks in, the team was still producing content. Twelve weeks in, she asked us directly: "How do I know this is working?" The content looked good. The blog traffic was up a little. ChatGPT still did not mention the brand.

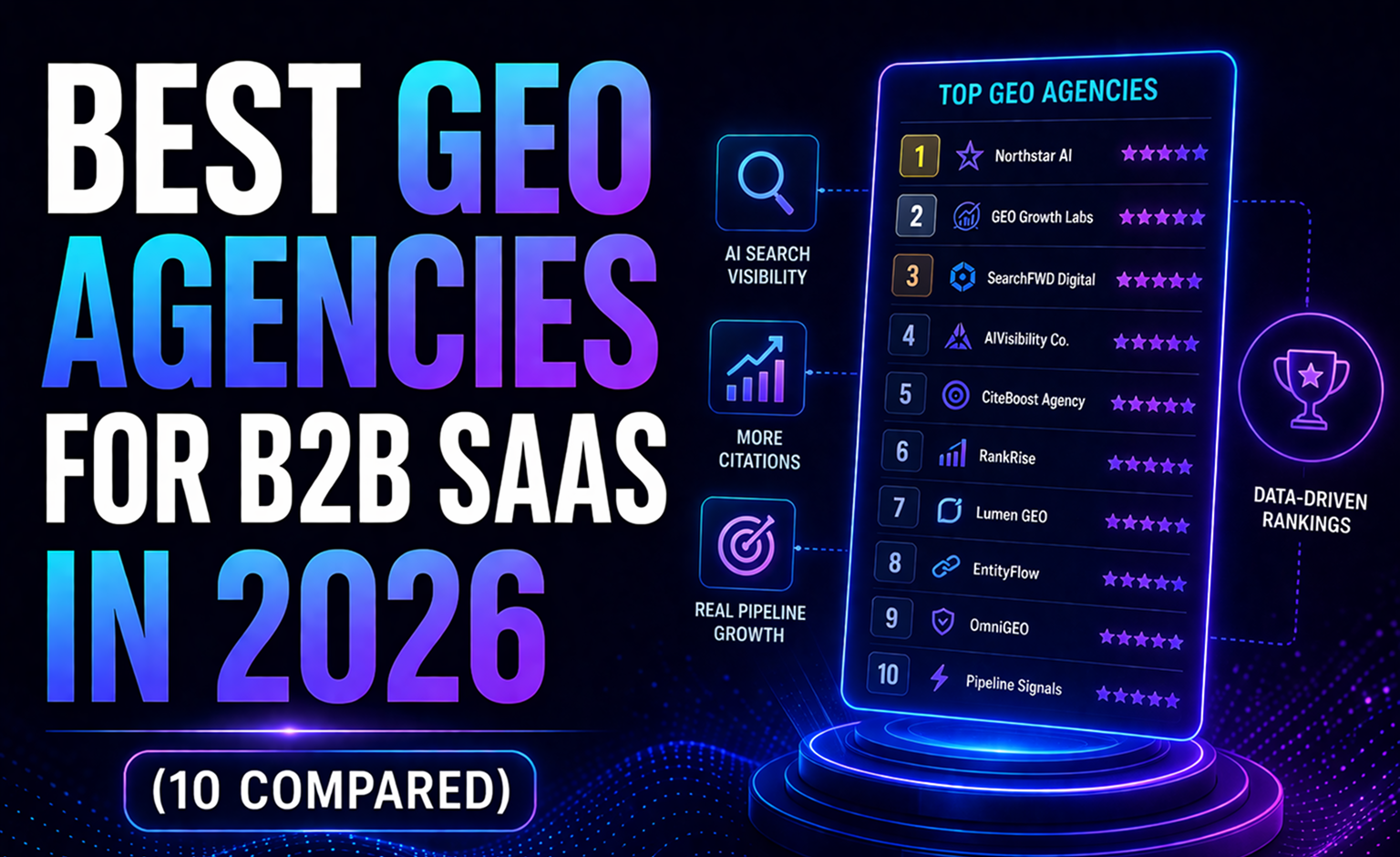

That pattern is the single most common GEO failure we see. Agencies and in-house teams default to "let's produce more content and hope AI picks it up," because content production is familiar and measurable. The problem is that content volume alone does not move AI citation rates. The brands dominating ChatGPT, Perplexity, Gemini, and Google AI Overviews in B2B SaaS categories did not get there by writing more. They got there by doing five distinct types of work in a specific order, on a specific timeline, with specific measurement hooks.

This playbook lays out that order. Three 30-day phases. Each phase builds on the previous. Each phase has its own deliverables and its own success check. At day 90, you have measurable movement and a decision framework for whether to continue, adjust, or stop. We are publishing it because most GEO advice online is too abstract to execute, and the concrete advice that exists tends to be agency sales copy rather than operational detail. This is the operational detail.

Quick Summary

What Is the 90-Day Citation Stack Deployment?

The 90-Day Citation Stack Deployment is the sequence we built after watching dozens of B2B SaaS GEO programs succeed or fail. The pattern was consistent enough to codify. Programs that sequenced technical foundations before content before amplification before measurement converted into pipeline. Programs that started with content (the most tempting order because content production is the most comfortable work) stalled.

The framework takes the Princeton, Georgia Tech, and IIT Delhi GEO research findings on what AI models actually extract, combines them with the Growth Marshal study on schema impact, the Frase research on FAQ schema in AI Overviews, the Profound data on ChatGPT citation sources, and our own operational experience to produce a sequence that respects how the engines actually work.

Each phase has a specific purpose. Foundation builds the infrastructure AI engines need to reliably extract and cite your content. Amplification creates the third-party corroboration AI engines weigh more heavily than your own domain. Measurement creates the feedback loop that lets you compound the work beyond day 90. Skip any phase, and the others stop paying off.

Why Do Most GEO Strategies Fail in 60 Days?

The three most common failure modes are predictable and preventable.

First, content-first sequencing. Teams publish new articles before fixing the extraction layer (schema, passage structure, IndexNow submission). The content that gets published is technically invisible to AI engines or misrepresented when extracted. The work feels productive but does not move citation metrics. Three months in, the team has 12 articles and zero citations.

Second, single-engine focus. Teams pick ChatGPT as "the" AI target and optimize everything for it. When Gemini's user base inside Google Workspace turns out to matter for their actual B2B buyers, they have nothing on the Google side. The opposite mistake (focusing on Google AI Overviews only and ignoring ChatGPT) happens just as often.

Third, no measurement baseline. Teams launch a GEO program without recording where they start. At 90 days, they cannot tell whether the small movements they see are progress, noise, or regression. The program gets killed because there is no data to defend the budget. Measurement baselines are cheap. Skipping them is expensive.

This playbook addresses all three failure modes explicitly. Foundation comes before content. Multi-engine setup is built into Phase 1. Baseline measurement is the first deliverable, before any production work starts.

Phase 1: The 30-Day Foundation (Days 1-30)

The goal of Phase 1 is infrastructure. No new blog content yet. No outreach yet. The work is technical, auditable, and almost entirely behind-the-scenes. At the end of Phase 1, the brand has the plumbing to execute the rest of the playbook with measurable results.

Week 1: Technical Baseline

The first week covers four deliverables, all of which should be done before any content work begins.

Audit robots.txt and allow all relevant AI crawlers: GPTBot, OAI-SearchBot, ChatGPT-User, PerplexityBot, Perplexity-User, ClaudeBot, Claude-SearchBot, Google-Extended (a robots.txt token, not a separate crawler, that governs Gemini's use of your content), Bingbot, Applebot, and CCBot. Most B2B SaaS sites either block these by default or allow some and block others without a documented reason. A permissive configuration with clear documentation removes a common silent failure mode.
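A minimal sketch of the audit, assuming Python's standard-library `urllib.robotparser` is an acceptable stand-in for how each crawler reads the file (individual crawlers may differ on edge cases such as wildcard paths). It generates an explicitly permissive robots.txt for the agents listed above and reports which agents a given robots.txt would block. The example.com domain and sitemap URL are placeholders.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens from the Week 1 audit list.
AI_AGENTS = [
    "GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot",
    "Perplexity-User", "ClaudeBot", "Claude-SearchBot", "Google-Extended",
    "Bingbot", "Applebot", "CCBot",
]


def build_robots_txt(agents, sitemap_url):
    """Return a permissive robots.txt with one explicit Allow group per agent."""
    lines = ["# AI crawler policy: explicitly allowed (reviewed in GEO Week 1)"]
    for agent in agents:
        lines += [f"User-agent: {agent}", "Allow: /", ""]
    lines += ["User-agent: *", "Allow: /", "", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines)


def blocked_agents(robots_txt, agents, url):
    """Return the agents that robots_txt would stop from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # Record a fetch time; can_fetch() returns False for every URL until one is set.
    parser.modified()
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


robots = build_robots_txt(AI_AGENTS, "https://example.com/sitemap.xml")
print(blocked_agents(robots, AI_AGENTS, "https://example.com/pricing"))
```

Running `blocked_agents` against your live file (fetched separately) turns the "allow some, block others without reason" problem into a list you can review line by line.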

Create and deploy llms.txt. The llms.txt specification, proposed by Jeremy Howard in September 2024, is a lightweight file at your domain root that tells AI systems what your site is about and which pages matter most. For a B2B SaaS company, a good llms.txt includes a clear brand definition, your three highest-value landing pages (pricing, product, case studies), your blog index, your about and team pages, and your key documentation URLs. The file takes 30 to 45 minutes to produce correctly and reduces AI misattribution meaningfully.
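The llms.txt proposal describes a Markdown file: an H1 with the site or project name, a blockquote summary, then H2 sections containing lists of links with optional notes. The sketch below renders that shape from a Python dict. The brand "ExampleCo", its summary, and every URL are hypothetical placeholders for the pages listed above.

```python
def build_llms_txt(name, summary, sections):
    """Render llms.txt: an H1 name, a blockquote summary, then H2 sections of links."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        for title, url, note in links:
            # Each entry is a Markdown link, optionally followed by ": note".
            lines.append(f"- [{title}]({url})" + (f": {note}" if note else ""))
        lines.append("")
    # Exactly one trailing newline.
    return "\n".join(lines).rstrip("\n") + "\n"


llms_txt = build_llms_txt(
    "ExampleCo",
    "ExampleCo is a B2B SaaS platform that automates invoice reconciliation "
    "for mid-market finance teams.",
    {
        "Key pages": [
            ("Pricing", "https://example.com/pricing", "Plans and per-seat pricing"),
            ("Product", "https://example.com/product", "Core features and integrations"),
            ("Case studies", "https://example.com/customers", None),
        ],
        "Blog": [("Blog index", "https://example.com/blog", None)],
        "Company": [
            ("About", "https://example.com/about", None),
            ("Team", "https://example.com/team", None),
        ],
        "Docs": [("Documentation", "https://example.com/docs", "Setup and API guides")],
    },
)
print(llms_txt)
```

Write the output to `/llms.txt` at the domain root and keep the summary line identical to the brand description standardized in Week 3.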

Set up IndexNow integration. IndexNow cuts content-to-citation time from 1-4 weeks to 3-7 days by notifying Bing (and therefore ChatGPT) of updates instantly. Most B2B SaaS stacks can implement IndexNow in a day through a plugin (WordPress, Webflow) or a simple API call from the CMS webhook (Next.js, custom stacks). Skip this and you give up roughly three weeks of freshness per content update.
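For a custom stack, the "simple API call from the CMS webhook" can look roughly like this sketch. It follows the public IndexNow protocol as we understand it: a JSON POST to the shared `api.indexnow.org` endpoint with `host`, `key`, `keyLocation`, and `urlList`, where the key is 8 to 128 characters of letters, digits, or dashes, the same key is served as a text file on your domain, and a single request carries at most 10,000 URLs. The host, key, and URLs below are placeholders; the function only builds requests, and sending them is left to the webhook.

```python
import json
import re
from urllib.parse import urlparse
from urllib.request import Request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"
KEY_PATTERN = re.compile(r"^[A-Za-z0-9-]{8,128}$")
MAX_URLS_PER_REQUEST = 10_000


def build_indexnow_requests(host, key, urls):
    """Build IndexNow POST requests for changed URLs on a single host."""
    if not KEY_PATTERN.match(key):
        raise ValueError("IndexNow keys are 8-128 characters of a-z, A-Z, 0-9 or '-'")
    foreign = [u for u in urls if urlparse(u).netloc != host]
    if foreign:
        raise ValueError(f"URLs outside {host}: {foreign}")
    requests = []
    for start in range(0, len(urls), MAX_URLS_PER_REQUEST):
        body = {
            "host": host,
            "key": key,
            # The key file must be reachable here and contain the key itself.
            "keyLocation": f"https://{host}/{key}.txt",
            "urlList": urls[start:start + MAX_URLS_PER_REQUEST],
        }
        requests.append(Request(
            INDEXNOW_ENDPOINT,
            data=json.dumps(body).encode("utf-8"),
            headers={"Content-Type": "application/json; charset=utf-8"},
            method="POST",
        ))
    return requests
```

In the webhook, each request would then be sent with `urllib.request.urlopen(req)`; a 200 or 202 response means the submission was received, not that the page is indexed or cited.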

Configure a GA4 custom channel group for AI traffic. Add a custom channel that captures referrals from chatgpt.com (and the legacy chat.openai.com), perplexity.ai, gemini.google.com, copilot.microsoft.com, and claude.ai, and place it above the Referral channel, because GA4 assigns each session to the first channel whose conditions match. This one change makes AI traffic visible in your existing dashboards, which turns AI citations from an abstract concept into a line item your CFO can track.
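One way to express the channel condition is a single "session source matches regex" rule. The pattern below is our assumption, not a GA4 default; it avoids lookarounds and backreferences, which RE2 (the regex engine GA4 uses) does not support. The Python wrapper lets you test it against sources from an existing GA4 export before saving the channel.

```python
import re

# Session-source condition for a custom AI channel (channel name is up to you).
# Anchored and RE2-compatible: an optional subdomain, then one listed domain.
AI_SOURCE_REGEX = (
    r"^(.*\.)?(chatgpt\.com|chat\.openai\.com|perplexity\.ai|"
    r"gemini\.google\.com|copilot\.microsoft\.com|claude\.ai)$"
)


def is_ai_source(session_source):
    """True if a GA4 session source would fall into the AI channel."""
    return re.fullmatch(AI_SOURCE_REGEX, session_source.strip().lower()) is not None


for source in ["chatgpt.com", "www.perplexity.ai", "google", "notperplexity.ai"]:
    print(source, is_ai_source(source))
```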

Week 2: Schema Implementation

Schema implementation is where most teams get it wrong because they add partial schema everywhere instead of complete schema on the pages that matter. The Growth Marshal study (n=730 citations) is clear: attribute-rich schema gets cited at 61.7% versus 41.6% for generic or minimal schema. Half-done is worse than not done.

For B2B SaaS, the Phase 1 schema targets are:

FAQPage schema on five strategic pages. Home page, pricing, primary product, one case study, and one category article. Each FAQPage should have five to ten questions with answers that are at least 40 words long and written in natural question-answer format. This directly feeds Google AI Overviews (Frase reports 3.2x citation lift for FAQPage schema) and provides extractable answer blocks for ChatGPT and Perplexity.
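A sketch of an FAQPage generator that enforces the spec above (five to ten questions, answers of at least 40 words) before emitting schema.org JSON-LD. The thresholds come from the text; the function names are hypothetical.

```python
import json


def build_faq_jsonld(pairs, min_words=40, min_questions=5, max_questions=10):
    """Build FAQPage JSON-LD from (question, answer) pairs, enforcing the Week 2 spec."""
    if not min_questions <= len(pairs) <= max_questions:
        raise ValueError(f"expected {min_questions}-{max_questions} questions, got {len(pairs)}")
    too_short = [q for q, a in pairs if len(a.split()) < min_words]
    if too_short:
        raise ValueError(f"answers under {min_words} words: {too_short}")
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Wrap JSON-LD in the script tag that is embedded in the page HTML."""
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"
```

Failing loudly on short answers is deliberate: given the "half-done is worse than not done" finding above, it is better to block a thin FAQ block than to ship it.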

Article + Person schema on every blog post. Full Article schema with datePublished, dateModified, author (Person), publisher (Organization), headline, description, image, keywords, and mainEntityOfPage. The Person schema needs a complete sameAs array pointing to the author's LinkedIn, Crunchbase, Wikidata (if applicable), and personal site. Thin Person schemas with just a name underperform no Person schema at all.
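The Article and Person fields listed above map onto schema.org JSON-LD roughly as follows. This is a sketch under our own conventions: the author, URLs, and dates are hypothetical, and the `person_jsonld` helper refuses a bare name because the text reports that thin Person schema underperforms none at all.

```python
from datetime import date


def person_jsonld(name, url, same_as):
    """Person node for the byline; rejects a bare name with no sameAs profiles."""
    if not same_as:
        raise ValueError("add sameAs links (LinkedIn, Crunchbase, Wikidata, personal site)")
    return {"@type": "Person", "name": name, "url": url, "sameAs": list(same_as)}


def article_jsonld(*, headline, description, page_url, image, keywords,
                   published, modified, author, publisher_name, publisher_url):
    """Article node carrying every field named in the Week 2 checklist."""
    if modified < published:
        raise ValueError("dateModified cannot precede datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "description": description,
        "image": image,
        "keywords": ", ".join(keywords),
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "author": author,
        "publisher": {"@type": "Organization", "name": publisher_name, "url": publisher_url},
        "mainEntityOfPage": {"@type": "WebPage", "@id": page_url},
    }


author = person_jsonld(
    "Jane Doe", "https://example.com/team/jane-doe",
    ["https://www.linkedin.com/in/janedoe-example", "https://janedoe.example"],
)
post = article_jsonld(
    headline="The 90-Day GEO Playbook", description="A phased GEO sequence.",
    page_url="https://example.com/blog/geo-playbook",
    image="https://example.com/img/geo.png", keywords=["GEO", "B2B SaaS"],
    published=date(2025, 1, 15), modified=date(2025, 3, 1), author=author,
    publisher_name="ExampleCo", publisher_url="https://example.com/",
)
```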

Organization schema on the home page. Complete with sameAs linking to LinkedIn, Clutch, G2, Trustpilot, Crunchbase, and all relevant social profiles. This is your entity anchor. AI models use it to disambiguate your brand from similarly-named entities and to build a consistent representation of who you are.
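A minimal Organization node for the home page might look like this sketch. The `#organization` fragment used as a stable `@id` is a common convention rather than a requirement, and every profile URL below is a hypothetical placeholder for your real LinkedIn, Clutch, G2, Trustpilot, and Crunchbase pages.

```python
def organization_jsonld(name, url, logo, description, same_as):
    """Organization node that acts as the entity anchor for the brand."""
    base = url.rstrip("/")
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        # A stable @id lets Article.publisher and other nodes point at one entity.
        "@id": f"{base}/#organization",
        "name": name,
        "url": base + "/",
        "logo": logo,
        "description": description,
        # Drop duplicate profile URLs while keeping their original order.
        "sameAs": list(dict.fromkeys(same_as)),
    }


org = organization_jsonld(
    "ExampleCo", "https://example.com",
    "https://example.com/logo.png",
    "ExampleCo automates invoice reconciliation for mid-market finance teams.",
    [
        "https://www.linkedin.com/company/exampleco",
        "https://www.g2.com/products/exampleco",
        "https://www.crunchbase.com/organization/exampleco",
        "https://www.linkedin.com/company/exampleco",
    ],
)
```

Keep the `description` string identical to the one standardized in the Week 3 entity audit, so the schema and the third-party profiles say the same thing.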

BreadcrumbList on every page. Simple, structural, and critical for both traditional search and AI retrieval.
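Because BreadcrumbList is structural, it can usually be generated from the URL path. The sketch assumes your URL hierarchy mirrors your site hierarchy; `labels` is a hypothetical mapping for segments whose display name differs from the slug.

```python
from urllib.parse import urlparse


def breadcrumb_jsonld(page_url, labels=None):
    """Build BreadcrumbList JSON-LD from a page URL, with Home as position 1."""
    labels = labels or {}
    parsed = urlparse(page_url)
    base = f"{parsed.scheme}://{parsed.netloc}"
    crumbs = [("Home", base + "/")]
    path = ""
    for segment in (s for s in parsed.path.split("/") if s):
        path += "/" + segment
        # Fall back to a title-cased slug when no explicit label is given.
        crumbs.append((labels.get(segment, segment.replace("-", " ").title()), base + path))
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(crumbs, start=1)
        ],
    }


crumbs = breadcrumb_jsonld("https://example.com/blog/geo-playbook", {"blog": "Blog"})
```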

Week 3: Entity Audit and Disambiguation

Week 3 is about making sure every place your brand appears describes it consistently. The Princeton GEO research found that entity consistency across sources is one of the strongest predictors of AI citation reliability. Inconsistency creates noise, and noise lowers the confidence AI has in its own answer about you.

Deliverables for the week: complete an entity audit across your website, LinkedIn company page, Clutch profile, G2 profile, Trustpilot profile, Crunchbase profile, Google Business Profile (if applicable), and all trade directory listings. Standardize the brand description, the core value proposition, the category labels, and the founder names. Any mismatches get logged and corrected.
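The mismatch log can be a short script over profile data you collect by hand. This sketch assumes each profile has been copied into a dict with four hypothetical fields (description, value proposition, category, founders). It ignores case and whitespace differences, treats founder lists as unordered, and logs missing fields as mismatches.

```python
FIELDS = ("description", "value_proposition", "category", "founders")


def _norm(value):
    """Case- and whitespace-insensitive form; lists compare as sorted tuples."""
    if isinstance(value, (list, tuple)):
        return tuple(sorted(_norm(v) for v in value))
    return " ".join(str(value).split()).casefold()


def audit_entity(canonical, profiles):
    """Return (source, field, found_value) for every field that deviates from canonical."""
    issues = []
    for source, profile in profiles.items():
        for field in FIELDS:
            found = profile.get(field)
            if found is None or _norm(found) != _norm(canonical[field]):
                issues.append((source, field, found))
    return issues


canonical = {
    "description": "ExampleCo automates invoice reconciliation.",
    "value_proposition": "Close the books faster.",
    "category": "Finance automation",
    "founders": ["Ana Lima", "Ben Ode"],
}
profiles = {
    "linkedin": {
        "description": "ExampleCo  automates invoice reconciliation.",
        "value_proposition": "close the books faster.",
        "category": "Finance Automation",
        "founders": ["Ben Ode", "Ana Lima"],
    },
    "g2": {
        "description": "ExampleCo automates invoice reconciliation.",
        "value_proposition": "Close the books faster.",
        "category": "AP software",
    },
}
for issue in audit_entity(canonical, profiles):
    print(issue)
```

Exact-match comparison is intentionally strict; a near-miss paraphrase still gets logged so a human can decide whether to standardize it.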

If your brand is notable enough (covered in third-party sources meeting Wikipedia's notability standards), start the Wikipedia page conversation with a specialized editor. The threshold is higher than most founders assume, but if you have 12+ Clutch reviews, 16+ G2 reviews, Crunchbase coverage, and named press mentions, the conversation is worth having.

Week 4: Content Inventory and AI Citation Baseline

Week 4 is where you build your starting line measurement. You cannot improve what you have not measured, and almost no B2B SaaS team has a reliable AI citation baseline.

Set up Peec AI (or a manual spreadsheet equivalent) with 20 to 30 prompts that represent how your buyers would describe their problem to an AI tool. Run each prompt across ChatGPT, Perplexity, Gemini, and Google AI Overviews. Record whether your brand appears, where in the answer it appears, which sources are cited, and which competitors show up. This becomes your baseline.
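If you use the manual spreadsheet route, each row can be modeled as one prompt run on one engine. This sketch uses hypothetical field and engine names; it records the four things the baseline needs (whether the brand appears, where, which sources, which competitors) and summarizes citation rate per engine and competitor frequency.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PromptRun:
    prompt: str
    engine: str                     # e.g. "chatgpt", "perplexity", "gemini", "google_ai_overviews"
    brand_cited: bool
    position: Optional[int] = None  # 1 = first brand named in the answer
    sources: List[str] = field(default_factory=list)
    competitors: List[str] = field(default_factory=list)


def citation_rate_by_engine(runs):
    """Share of runs per engine in which the brand was cited."""
    cited, total = defaultdict(int), defaultdict(int)
    for run in runs:
        total[run.engine] += 1
        cited[run.engine] += int(run.brand_cited)
    return {engine: cited[engine] / total[engine] for engine in sorted(total)}


def competitor_counts(runs):
    """How often each competitor appears across all runs."""
    return Counter(name for run in runs for name in run.competitors)


runs = [
    PromptRun("best invoice reconciliation tool", "chatgpt", True, 2, ["g2.com"], ["RivalA"]),
    PromptRun("how to speed up month-end close", "chatgpt", False, competitors=["RivalA", "RivalB"]),
    PromptRun("best invoice reconciliation tool", "perplexity", False),
    PromptRun("best invoice reconciliation tool", "gemini", True, 1),
]
print(citation_rate_by_engine(runs))
```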

Simultaneously, inventory your existing content. List every blog post, landing page, and case study. For each, note the datePublished, dateModified, word count, primary topic, and whether it has schema. This inventory becomes the raw material for Phase 2 and for the refresh cycle in Phase 3.
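Once the inventory exists, a few lines can surface the pages that need attention first. In this sketch each row is a dict with hypothetical keys, and the 90-day staleness threshold is an illustrative default borrowed from the stat-refresh rule in Week 13, not a fixed rule.

```python
from datetime import date


def inventory_flags(items, today, stale_after_days=90):
    """Flag rows that lack schema or whose dateModified is older than the threshold."""
    flagged = []
    # Oldest first, so the stalest pages head the list.
    for item in sorted(items, key=lambda i: i["dateModified"]):
        reasons = []
        if not item["has_schema"]:
            reasons.append("no schema")
        age = (today - item["dateModified"]).days
        if age > stale_after_days:
            reasons.append(f"dateModified {age} days ago")
        if reasons:
            flagged.append((item["url"], reasons))
    return flagged


inventory = [
    {"url": "/blog/a", "datePublished": date(2024, 11, 1), "dateModified": date(2025, 5, 20),
     "word_count": 2400, "topic": "reconciliation", "has_schema": True},
    {"url": "/blog/b", "datePublished": date(2024, 6, 1), "dateModified": date(2025, 1, 1),
     "word_count": 1200, "topic": "month-end close", "has_schema": False},
    {"url": "/pricing", "datePublished": date(2023, 3, 1), "dateModified": date(2025, 4, 1),
     "word_count": 600, "topic": "pricing", "has_schema": False},
]
for url, reasons in inventory_flags(inventory, today=date(2025, 6, 1)):
    print(url, reasons)
```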

Phase 2: The 30-Day Amplification (Days 31-60)

Phase 2 is where content and distribution kick in. The foundation is done. The plumbing works. Now you build the signals that AI engines weigh most heavily: third-party corroboration.

Weeks 5-6: Pillar Content Production

Produce four long-form pillar articles over two weeks. Each one targets a specific category prompt from your Phase 1 baseline (pick the four queries where your absence is most costly). The specs for each pillar are strict.

Word count between 3,500 and 5,000. Structure in the "two-layer architecture" pattern: a Quick Answer section in the first 200 words that an AI can extract as a complete standalone response, followed by deeper sections for human readers. Every H2 phrased as a natural question. Every section opens with a definition-first sentence that works as a self-contained answer. Include at least two HTML tables, at least one comparison table, and six to eight FAQ entries with FAQPage schema at the bottom.
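The mechanical parts of this spec can be checked automatically before a draft goes to review. The sketch assumes drafts are written in Markdown with `## ` H2 headings and inline HTML `<table>` elements; whether a table is a comparison table, and whether each section opens definition-first, remain editorial judgments for a human.

```python
import re


def check_pillar(markdown, faq_count):
    """Return a list of spec violations for a pillar draft (empty list = passes)."""
    problems = []
    words = markdown.split()
    if not 3500 <= len(words) <= 5000:
        problems.append(f"word count {len(words)} is outside 3,500-5,000")
    if "quick answer" not in " ".join(words[:200]).lower():
        problems.append("no Quick Answer section in the first 200 words")
    headings = re.findall(r"^## (.+)$", markdown, flags=re.MULTILINE)
    not_questions = [h for h in headings if not h.strip().endswith("?")]
    if not_questions:
        problems.append(f"H2s not phrased as questions: {not_questions}")
    if len(re.findall(r"<table\b", markdown, flags=re.IGNORECASE)) < 2:
        problems.append("fewer than two HTML tables")
    if not 6 <= faq_count <= 8:
        problems.append(f"{faq_count} FAQ entries; the spec calls for six to eight")
    return problems


draft = ("Quick Answer: X is Y.\n\n## What is X?\n\n" + "word " * 3600
         + "\n<table></table>\n<table></table>\n")
print(check_pillar(draft, faq_count=7))
```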

For the writing itself, lean on specific numbers with named sources (the Forbes 41% AI trust stat, the SimilarWeb 2025 Generative AI Report on conversion rate data, the Profound ChatGPT sources study). AI engines extract stats with sources at higher rates than generic claims. Opinion helps too. Content that takes clear positions ("most schema guides are wrong about X") gets cited more than balanced content that hedges.

Week 7: Reddit Distribution

Reddit is still the most-cited social source in AI answers, accounting for roughly 21% of Google AI Overview citations and 46.7% of Perplexity citations. For B2B SaaS categories with active Reddit communities, this is a compounding source.

The week's work is narrow and specific. Identify three to five subreddits where your target buyers spend time. Read them for a few days to understand the tone, rules, and active moderators. Write two or three posts that add genuine value to active conversations. One could be a thoughtful response in a comment thread. One could be a "here's what we learned from our last 20 clients" post in the appropriate subreddit. One could be a case study shared transparently with disclosure.

What not to do: mass-post the same message across 15 subreddits, reply with links to your pillar content without context, or run a karma-farming campaign. Reddit's moderation systems and the AI engines that read Reddit both penalize that behavior heavily. One high-quality post in the right subreddit outperforms twenty promotional posts.

Week 8: LinkedIn Pulse and Cross-Publishing

LinkedIn Pulse citations in ChatGPT grew 4.2x in three months at the end of 2025. Perplexity LinkedIn citations grew 5.7x in the same window. For B2B SaaS specifically, LinkedIn is the highest-leverage earned-media channel currently available.

Week 8's work: for each of the four pillars from Weeks 5-6, write a distinct LinkedIn Pulse version in the voice of a named executive (CEO or CMO). It is not a copy-paste. It's a 1,500- to 2,000-word adaptation that leads with a specific experience or observation the executive had, then pulls from the pillar's frameworks and data. This gives AI engines two complementary signals: the deep version on your domain and the personal version on a high-authority platform.

Schedule the LinkedIn Pulse articles one per week over the next four weeks. Comment engagement on these articles drives further visibility, so reply thoughtfully to the first wave of responses within 48 hours.

Phase 3: The 30-Day Measurement (Days 61-90)

Phase 3 is where the work becomes a feedback loop. Content is live. Distribution has happened. The question is what moved.

Weeks 9-10: Bing Webmaster AI Performance Monitoring

Microsoft launched AI Performance reporting in Bing Webmaster Tools on February 10, 2026, giving publishers the first direct view into how their content gets cited by Microsoft Copilot and other AI products. This is the first measurement layer to configure in Phase 3.

Set up Bing Webmaster Tools (if not already active), enable AI Performance, and review the initial dashboard. Key metrics to watch: total citations, average cited pages per day, grounding queries, page-level citation activity, and citation trends over time. Build a weekly review rhythm on these numbers and tie them to the content produced in Weeks 5-6.
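For the weekly review rhythm, a small roll-up of daily numbers is enough. We have not assumed any particular export format from Bing Webmaster Tools: the sketch takes hypothetical `(day, citations)` pairs, however you extract them, and sums them into ISO weeks with a week-over-week change.

```python
from collections import defaultdict
from datetime import date


def weekly_totals(daily_rows):
    """Sum (day, citations) rows into ISO weeks keyed by (iso_year, iso_week)."""
    totals = defaultdict(int)
    for day, citations in daily_rows:
        iso = day.isocalendar()
        totals[(iso[0], iso[1])] += citations
    return dict(sorted(totals.items()))


def week_over_week(totals):
    """Change in citations between consecutive weeks present in the data."""
    values = list(totals.values())
    return [later - earlier for earlier, later in zip(values, values[1:])]


rows = [(date(2026, 3, 2), 3), (date(2026, 3, 4), 2), (date(2026, 3, 9), 7)]
totals = weekly_totals(rows)
print(totals, week_over_week(totals))
```

Weeks with no rows are simply absent, so a gap in the export shows up as a missing key rather than a zero.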

Weeks 11-12: Peec AI Tracking and GA4 Attribution

Return to the Peec AI baseline from Week 4. Re-run the same 20 to 30 prompts. Document where your citation rate changed, which engines moved, and which queries you now appear on. This is the first direct comparison between pre-program and post-program visibility.
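The baseline-versus-re-run comparison reduces to two small calculations: the change in citation rate per engine, in percentage points, and the prompt/engine pairs that are newly cited. The sketch assumes you already have per-engine rates (as fractions) and sets of cited `(prompt, engine)` pairs for both runs; the numbers below are hypothetical.

```python
def citation_deltas(baseline, current):
    """Per-engine change in citation rate, in percentage points."""
    engines = sorted(set(baseline) | set(current))
    return {e: round((current.get(e, 0.0) - baseline.get(e, 0.0)) * 100, 1) for e in engines}


def newly_cited(baseline_hits, current_hits):
    """(prompt, engine) pairs cited in the re-run but not in the baseline."""
    return sorted(set(current_hits) - set(baseline_hits))


baseline = {"chatgpt": 0.10, "gemini": 0.0}
current = {"chatgpt": 0.25, "gemini": 0.10, "perplexity": 0.05}
print(citation_deltas(baseline, current))
```

Treating an engine missing from the baseline as 0% keeps newly tracked engines visible instead of silently dropping them.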

Simultaneously, check the GA4 custom channel group you set up in Week 1. AI-referred traffic should be visible by now. Calculate conversion rates for this segment and compare to your organic search baseline. AI traffic typically converts at 3 to 6x organic search, so the economics should become visible in this measurement window even with small volume.
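The comparison itself is simple arithmetic: the AI-channel conversion rate divided by the organic conversion rate. The session and conversion counts below are hypothetical. With small AI volumes the ratio can swing widely from month to month, so treat an early multiple as directional.

```python
def conversion_rate(conversions, sessions):
    """Conversions per session; 0.0 when there are no sessions."""
    return conversions / sessions if sessions else 0.0


def ai_to_organic_multiple(ai_conversions, ai_sessions, organic_conversions, organic_sessions):
    """How many times the organic conversion rate the AI channel converts at."""
    organic = conversion_rate(organic_conversions, organic_sessions)
    if organic == 0:
        raise ValueError("organic baseline has no conversions to compare against")
    return conversion_rate(ai_conversions, ai_sessions) / organic


# 12 conversions from 300 AI sessions vs 100 from 10,000 organic sessions.
print(ai_to_organic_multiple(12, 300, 100, 10_000))
```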

Week 13: First Refresh Cycle

Fresh content keeps its citations. Content over 45 days old starts dropping out of ChatGPT, over 28 days old starts dropping out of Perplexity, and over 70 days old starts dropping out of Google AI Overviews. The refresh cycle is not optional if you want to hold the gains.

Pick the three highest-performing pillars from Phase 2 and refresh each one. Update any stats older than 90 days. Add any new developments in the topic since publication. Adjust the dateModified field. Push the changes through IndexNow. This is a recurring 30-day rhythm from here forward, not a one-time task.
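The selection step can be scripted with the dropout thresholds from the previous paragraph (28 days for Perplexity, 45 for ChatGPT, 70 for Google AI Overviews). In this sketch each pillar is a hypothetical dict with a URL, its current dateModified, and a citation count from your tracking; the top three by citations are returned along with the engines where each is already past its threshold.

```python
from datetime import date

# Days since dateModified after which content starts dropping out, per the text.
DROPOUT_DAYS = {"perplexity": 28, "chatgpt": 45, "google_ai_overviews": 70}


def engines_at_risk(date_modified, today):
    """Engines whose dropout threshold this page has already passed."""
    age = (today - date_modified).days
    return sorted(engine for engine, limit in DROPOUT_DAYS.items() if age > limit)


def pick_refresh_candidates(pillars, today, n=3):
    """Top-n pillars by citations, each paired with its at-risk engines."""
    top = sorted(pillars, key=lambda p: p["citations"], reverse=True)[:n]
    return [(p["url"], engines_at_risk(p["date_modified"], today)) for p in top]


pillars = [
    {"url": "/blog/pillar-1", "date_modified": date(2026, 1, 15), "citations": 5},
    {"url": "/blog/pillar-2", "date_modified": date(2025, 12, 1), "citations": 9},
    {"url": "/blog/pillar-3", "date_modified": date(2026, 2, 20), "citations": 1},
    {"url": "/blog/pillar-4", "date_modified": date(2026, 2, 1), "citations": 7},
]
for url, risk in pick_refresh_candidates(pillars, today=date(2026, 3, 1)):
    print(url, risk)
```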

How Does the Playbook Change by Target Engine?

One of the questions we get most often from clients during Phase 1 is whether the playbook should be tweaked for a specific AI engine. The short answer is yes, at the margins. The core sequence (foundation, amplification, measurement) stays the same. The emphasis within each phase shifts based on the engine most relevant to your buyer's workflow.

ChatGPT-prioritized version. ChatGPT retrieves primarily through Bing's index. Emphasize IndexNow configuration in Week 1 (it is the fastest path to ChatGPT's retrieval loop). Weight Reddit and LinkedIn distribution more heavily in Phase 2 because ChatGPT has a direct data partnership with Reddit and increasingly cites LinkedIn Pulse. Consider a lightweight but complete Wikipedia effort in Week 3 if you meet Wikipedia's notability threshold, because Wikipedia accounts for approximately 7.8% of ChatGPT citations per the Profound study of over one billion citations.

Perplexity-prioritized version. Perplexity is the strictest of the major engines on source quality and recency. Shorten the refresh cycle in Phase 3 to 21 days instead of 30. Emphasize high-density fact pages with named sources in Phase 2 content production. Perplexity weights structured content heavily, so the schema and passage structure work in Phase 1 pays off here more than anywhere else. The retrieval half-life we have measured for Perplexity is 28 days, which is the shortest of the four engines tracked.

Google AI Overviews and Gemini-prioritized version. Both engines share the Google index. Emphasize traditional SEO hygiene in Phase 1 (page speed, mobile rendering, E-E-A-T author bylines) because Google AI Overviews applies E-E-A-T principles explicitly. Invest more in long-form pillar content in Weeks 5-6 because Google's retrieval favors depth. The refresh cycle can be longer (60 to 70 days) because Google AI Overviews holds citations longest.

Claude-prioritized version (rare for B2B SaaS today, but growing). Claude is the most skeptical of promotional language. Neutral, encyclopedic, specific content outperforms marketing copy. Remove adjectives. Replace claims with specifics. Make sure author bylines are verifiable. If Claude matters to your buyer base, the editorial discipline required is the highest of any engine.

Most B2B SaaS companies run a blended version across ChatGPT and Gemini or Google AI Overviews as the two highest-priority engines. The playbook is robust enough to serve both at once, as long as the engine-specific weighting is accounted for.
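One reasonable way to account for engine-specific weighting in a blended program is to refresh at the pace of the fastest-decaying engine you target. The sketch encodes the cadences given above (21 days for Perplexity, 60 days as the conservative end of the 60-to-70-day range for Google AI Overviews and Gemini) and falls back to the default 30-day cycle for engines without a stated override, such as ChatGPT and Claude. The "take the minimum" rule is our assumption.

```python
DEFAULT_REFRESH_DAYS = 30
ENGINE_REFRESH_DAYS = {"perplexity": 21, "google_ai_overviews": 60, "gemini": 60}


def blended_refresh_days(engines):
    """Refresh cadence for a set of target engines: the shortest one wins."""
    return min(
        (ENGINE_REFRESH_DAYS.get(engine, DEFAULT_REFRESH_DAYS) for engine in engines),
        default=DEFAULT_REFRESH_DAYS,
    )


print(blended_refresh_days(["chatgpt", "gemini"]))
```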

Comparison Tables: Phase Deliverables and Tools

What Results Can You Expect After 90 Days?

The honest answer depends on starting conditions. A brand with existing third-party coverage (Wikipedia-adjacent notability, active presence in Clutch or G2, some Reddit footprint, moderate LinkedIn publishing) sees measurable AI citation presence within 45 days and meaningful pipeline impact by day 90.

A brand starting from zero (no Wikipedia consideration, no Reddit presence, no G2 or Clutch, no executive LinkedIn publishing) sees the first signals by day 60 and meaningful results closer to day 120 to 150. The 90-day playbook is designed to get both types of brands to a clear measurement baseline by day 90, from which the work continues.

Our HermQ case study shows the compounded outcome of this sequencing: a 55.7% increase in monthly clicks, an average position improvement from 19.9 to 13.1, and a 73.7% lift in brand-query clicks, all within approximately 90 days of engagement start.

How Do You Scale Beyond 90 Days?

The 90-day playbook is the starting point, not the end state. Brands that compound citations beyond day 90 do three things consistently.

They refresh on a 30-day rhythm. The refresh cycle from Week 13 becomes a monthly ritual. Pick three pieces. Update them. Push through IndexNow. Track citation retention week over week.

They publish on a 4-to-6-per-month cadence. Not more. The constraint forces quality. Each piece is long-form (3,000+ words), structurally sound (definition-first, tables, FAQPage schema), and amplified (Reddit, LinkedIn Pulse, IndexNow).

They pitch third-party inclusions monthly. Every month, pitch at least five trade publications, podcasts, or agency listicles for inclusion. The earned-media layer is the compounding layer. A single mention in a publication AI already trusts can move the needle more than six months of on-site content.

What Does the Compounding Cycle Look Like After Day 90?

The 90-day playbook builds the foundation. The compounding cycle is what turns that foundation into a long-term moat. We see three patterns in brands that compound successfully past day 90.

The monthly pillar rhythm. One new long-form pillar per month, rather than the four-in-two-weeks pace of Phase 2. Each pillar picks up where the previous one left off, building a topic cluster that AI engines recognize as authority territory. By month 6, a brand has six pillars covering the full depth of its category, each one reinforcing the others through internal linking and consistent framework vocabulary.

The earned-media flywheel. One trade publication pitch per week, targeting outlets that AI engines already cite heavily for your category. Most pitches fail. The ones that land create third-party mentions that compound faster than any owned-media work. Over 12 months, a single accepted pitch in the right outlet can produce 3 to 5 distinct AI citation contexts where your brand appears.

The measurement ritual. Weekly Peec AI review, monthly Bing Webmaster AI Performance deep dive, quarterly retrospective on the full citation landscape. Brands that skip this ritual lose track of which work is paying off. Brands that maintain it make better budget decisions quarter over quarter.

The outcome of compounding done right: by months 9 to 12, a brand that started at zero has 10 to 15 category queries where it shows up consistently across at least two engines. By month 18, AI search becomes a measurable pipeline channel with its own conversion rates and CAC economics. That is when the work stops being a project and starts being infrastructure.

Key Takeaways

- GEO is a sequencing problem first, a content problem second. Foundation before amplification before measurement.

- Phase 1 has no new content. Resist the urge. Technical foundations and schema unlock everything downstream.

- Attribute-rich schema matters. Half-implemented schema underperforms pages with no schema at all.

- Reddit is still the most-cited social source in AI answers. LinkedIn Pulse is the fastest-growing.

- Freshness has a half-life. Refresh on a 30-day rhythm for critical content.

- Measure before you start. Measure again at day 90. Without both numbers, you cannot defend the budget.

Frequently Asked Questions (FAQs)

Can this playbook be executed in-house or does it require an agency?

It can be executed in-house with a two-to-three-person marketing team. The foundational work (robots.txt, llms.txt, IndexNow, GA4) takes a few hours to a day per item. Schema implementation takes a week. Content production takes most of Phase 2. The harder-to-scale parts are Reddit community engagement done correctly and LinkedIn Pulse distribution at scale, which is where outside help pays off most.

How much should I budget for the 90-day program?

In-house with existing headcount: under $2,000/month for tools (Peec AI, IndexNow plugin, schema validation). Agency-supported: $4,500 to $14,000/month depending on scope. The variable is how much of the specialist work (Reddit, LinkedIn, trade outreach) you can absorb internally.

Which AI engine should I prioritize in the first 90 days?

For B2B SaaS selling to mid-market and enterprise buyers who work inside Google Workspace, prioritize Gemini and Google AI Overviews. For B2B SaaS selling to technical or research-oriented users, prioritize Perplexity and ChatGPT. The playbook is designed to build presence in all four engines, but the Phase 1 and Phase 2 content decisions should lean toward your buyers' preferred engine.

What if my category has no active Reddit presence?

Replace the Week 7 Reddit distribution with trade publication outreach and analyst briefings. The goal of that week is third-party corroboration. Reddit is the highest-leverage source for most B2B SaaS categories, but if yours is an exception (highly regulated verticals, enterprise-only niches), pivot the energy into the adjacent earned-media channels.

How do I know if the playbook is working at day 30 and day 60?

Day 30: schema is fully deployed, IndexNow is active, the baseline Peec AI measurement is captured, and the content inventory is complete. Expect little visible citation progress at this point. Phase 1 is infrastructure work, so a flat citation picture at day 30 is not a failure signal.

Day 60: four pillars published, the first LinkedIn Pulse articles live under the CEO's or CMO's byline, Reddit posts published, and the first IndexNow submissions processed by Bing. You should see a small citation footprint appearing on two to three of your target queries, typically in Gemini or Google AI Overviews first because the Google index turns fastest.

What do I do if the program stalls at day 60?

Diagnose which phase underperformed. If Phase 1 was incomplete (schema partial, llms.txt missing, IndexNow not live), the content in Phase 2 is invisible to AI. Fix the foundation and re-push the content. If Phase 1 was solid but Phase 2 content quality was weak (generic, no original data, no opinion), the content is getting indexed but not cited. Rewrite two of the four pillars with stronger specificity and refresh them through IndexNow. If both phases were solid but there is still no citation movement, the category itself may have unusually deep competitor coverage. This is where an agency with earned-media outreach capability becomes essential.