The 2026 B2B SaaS AI Citation Benchmark Report is a live analysis of how brand mentions actually form inside ChatGPT, Perplexity, Gemini, and Google AI Overviews when buyers ask the questions that drive B2B SaaS pipeline. We track 25 purchase-intent prompts across twelve agencies using Peec AI and measure who shows up, who gets ignored, and why. This is the first time we are publishing the raw data, including our own brand's position in the ranking.

Introduction

A VP of Marketing at a Series B B2B SaaS company ran a simple experiment last month. She opened ChatGPT and typed "best GEO agency for B2B companies." Her brand wasn't mentioned. Not in the top five, not in the fallback list, not even in the "also consider" suggestions ChatGPT offers when asked twice. She tried Perplexity. Same result. Gemini. Still nothing. Her closest three competitors, meanwhile, were being recommended by all four engines in some combination.

That experience is more common than most B2B marketing teams realize. We ran the same style of audit across 25 purchase-intent prompts for twelve agencies in our own category and found something uncomfortable: the brands that dominate these answers are not the biggest, the oldest, or the ones with the most polished sites. They are the ones that happen to sit in the specific sources each model retrieves from, in the specific format each model extracts, and at the specific freshness threshold each model prefers.

We built this report because the Princeton, Georgia Tech, and IIT Delhi GEO research covers the general case but not the B2B SaaS one. The general advice is useful. The specific advice, the kind you can act on this quarter, only exists inside primary data. We collected ours through Peec AI, running daily prompts across four engines over a sixty-day window, and we are publishing what we found.

Why Did We Build This Report?

Most AI visibility research stops at the general case. Papers measure lift across broad queries. Agency blog posts describe what worked for one client and generalize from there. Both have their place. Neither helps a B2B SaaS head of marketing decide, on a Tuesday afternoon, whether to spend the next quarter's content budget on a Wikipedia strategy, a Reddit strategy, or yet another content hub.

We pivoted Red-engage last month from a Reddit and LLM optimization agency into a pure generative engine optimization agency focused on B2B SaaS. The decision forced us to answer a question our own clients kept asking: what specifically moves the needle on ChatGPT, Perplexity, Gemini, and AI Overviews for brands selling to other businesses, with purchase cycles measured in months and buying committees of five to eight people?

The data we had was fragmented. Some of it sat inside Peec AI. Some of it was in monthly client reports. Some of it was in our own head and had never been written down. We pulled it together, re-tracked it over a clean sixty-day window, and compared what we saw to what the public research says. This report is the result.

We are not publishing it because it paints us well. Our own brand ranks tenth out of the twelve agencies we track on the Peec dashboard we run. That is humbling. It is also the reason we trust the framework we are about to describe. We built it by watching our competitors win.

What Did We Actually Measure?

The methodology is simple enough to replicate. Twelve agency brands, tracked in the same Peec AI project: iPullRank, Growth Marketing Pro, Growthner, Single Grain, Taktical, Outorigin, NoGood, Foundation, Omnius, Soar, RedditAgency.com, and Red-engage. Twenty-five prompts, all of them the kind of query a B2B marketing buyer would type into an AI tool when trying to shortlist vendors. Prompts were grouped into four topics: GEO and AI search, LLM visibility, Reddit-adjacent discovery, and brand monitoring.

We picked the twelve on two criteria. First, direct competitive overlap with at least part of our service offer. Second, presence in at least one external agency listicle in ChatGPT's retrieval set when answering "best GEO agency" queries. That combination gave us a panel where the brands were genuinely comparable and the engines had reason to know about them. It deliberately excluded two types of brands: large horizontal agencies with passing GEO offerings (too broad to compare cleanly) and pure PR agencies claiming GEO work (too early and too unproven to measure).

Each prompt ran daily against four engine families: ChatGPT (via scraper and live search), Perplexity (Sonar and scraper), Gemini (2.5 Flash and scraper), and Google AI Overviews (via scraper). Every response was logged, the brand mentions extracted, the source URLs recorded, and the position within the answer noted. The time window was 30 days of consolidated data, with another 30 days of longitudinal comparison.

We excluded our branded queries from the main analysis ("what is Red-engage") because they skew the picture. When a user asks about us by name, we appear 94% of the time, but that number tells us nothing about discovery. The 25 prompts in the main analysis were all category queries: the questions that a buyer asks before they have a preferred vendor.

What came out was a visibility ranking, a share-of-voice ranking, a sentiment ranking, and a position ranking, split by engine. Then we cross-referenced those numbers against the source URLs the engines actually retrieved, which turned out to be the single most useful layer in the entire dataset.
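For readers who want to replicate the rankings from their own logs, here is a minimal sketch of how visibility, share of voice, and average position could be computed from logged answers. The record shape, the function names, and the exact metric definitions are assumptions made for illustration; they are not Peec AI's formulas, and the brand names are placeholders.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class LoggedResponse:
    """One engine answer to one prompt on one day (hypothetical record shape)."""
    engine: str
    prompt: str
    brands_in_order: tuple  # brand names in the order they appear in the answer


def visibility(responses, brand):
    """Share of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if brand in r.brands_in_order)
    return hits / len(responses)


def share_of_voice(responses):
    """Each brand's share of all brand mentions (one mention per answer)."""
    mentions = Counter(b for r in responses for b in set(r.brands_in_order))
    total = sum(mentions.values())
    return {b: n / total for b, n in mentions.items()} if total else {}


def average_position(responses, brand):
    """Mean 1-based position of the brand in answers that mention it, else None."""
    positions = [r.brands_in_order.index(brand) + 1
                 for r in responses if brand in r.brands_in_order]
    return sum(positions) / len(positions) if positions else None


def by_engine(responses):
    """Group responses by engine so each metric can be split per engine."""
    grouped = defaultdict(list)
    for r in responses:
        grouped[r.engine].append(r)
    return dict(grouped)


# Placeholder data, not taken from the report's dataset.
sample = [
    LoggedResponse("chatgpt", "best GEO agency for B2B companies", ("Brand A", "Brand B")),
    LoggedResponse("chatgpt", "top LLM visibility agencies", ("Brand B",)),
    LoggedResponse("perplexity", "best GEO agency for B2B companies", ("Brand B", "Brand C", "Brand A")),
    LoggedResponse("perplexity", "top LLM visibility agencies", ()),
]

for engine, rows in by_engine(sample).items():
    print(engine, visibility(rows, "Brand A"), average_position(rows, "Brand A"))
```

Counting each brand once per answer keeps a single long answer that repeats a name from inflating share of voice. Counting every occurrence would be an equally defensible choice.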

Which Domains Dominate B2B SaaS AI Citations?

The brand ranking told us who was winning. The domain ranking told us why.

Across the 25 category prompts, the engines retrieved URLs from a predictable set of sources. Some were owned domains (individual agency sites). Most were not. The pattern was consistent across ChatGPT, Perplexity, and Gemini, with Google AI Overviews skewing slightly toward editorial sources.

Two things jump out. First, Reddit is still the most retrieved single domain in B2B SaaS AI citations, even after the pivot most agencies have made away from it. Second, our own domain (red-engage.com) does not appear anywhere in the top ten sources retrieved by AI engines answering these 25 prompts. Our content exists. It is being indexed. It is not being retrieved in the right query contexts.

The third observation is harder to see until you look at the co-mention column. Foundation gets co-mentioned with more brands than any other agency, which tells us something specific: Foundation is doing outreach to the exact third-party sources that AI models trust most. They may or may not be spending more on content than anyone else. What matters is where they show up.

For a B2B SaaS brand trying to move AI visibility, the implication is not "publish more on your own site." It is "get mentioned, preferably multiple times, in the specific places your target engine already trusts." That is a very different strategy.
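The domain tally behind this section is easy to reproduce from any list of cited URLs. The sketch below assumes you have exported those URLs yourself. The function names and example URLs are hypothetical, and subdomains such as `old.reddit.com` are deliberately not collapsed into their parent domain.

```python
from collections import Counter
from urllib.parse import urlsplit


def domain_of(url):
    """Return the lowercase host of a URL without a leading 'www.'."""
    host = (urlsplit(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def tally_domains(cited_urls):
    """Count how often each domain appears among the cited URLs."""
    domains = (domain_of(u) for u in cited_urls)
    return Counter(d for d in domains if d)


def owned_share(cited_urls, owned_domains):
    """Fraction of citations that point at domains you control."""
    counts = tally_domains(cited_urls)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    owned = sum(n for d, n in counts.items() if d in owned_domains)
    return owned / total


# Placeholder URLs for illustration only.
cited = [
    "https://www.reddit.com/r/example/comments/abc",
    "https://reddit.com/r/example/comments/def",
    "https://www.linkedin.com/pulse/example-post",
    "https://agency.example/blog/geo-guide",
]

print(tally_domains(cited).most_common(3))
print(owned_share(cited, {"agency.example"}))
```

A low owned share is the signal described above: the engines are answering from third-party sources, so that is where mentions need to appear.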

What Is the Citation Stack Framework?

We call it the Citation Stack. It's the mental model we use internally to explain why some brands dominate AI answers and why others don't.

The stack has five layers. Each one contributes independently. Each one is measurable. And the compound effect of all five is what creates the difference between a brand that shows up in 20% of answers and a brand that shows up in 1%.

Layer 1: Source Authority

Source Authority is whether the domains mentioning your brand are trusted by the engine making the citation. Profound analyzed over one billion ChatGPT citations and found that Wikipedia accounts for 7.8% of all ChatGPT citations, the single most referenced source. Reddit, LinkedIn, and editorial publications dominate the rest.

The practical question for a B2B SaaS brand is which of those sources will realistically mention you. For most mid-market SaaS companies, Wikipedia is out of reach (too early stage for notability). Reddit is accessible. LinkedIn is accessible. Trade publications (not TechCrunch, more like the industry-specific trade press for your vertical) are accessible.

Source Authority scores well when your brand has multiple mentions across a mix of high-trust sources. It scores poorly when your only brand mentions are on your own domain.

Layer 2: Passage Structure

Passage Structure is whether the content mentioning your brand is formatted in a way AI models can extract cleanly. The Princeton, Georgia Tech, and IIT Delhi research found that "definition-first" sentence patterns (where a section opens with "X is" or "X refers to") get extracted at roughly twice the rate of narrative openings. Self-contained passages of 134 to 167 words outperform longer or shorter passages. Paragraphs that depend on previous context are systematically skipped.

Most SaaS blog posts are written as narratives. Good for humans, bad for AI extraction. The fix is structural. Every section should make sense when extracted alone. The answer to the section's question should appear in the first two sentences. The rest of the section should add depth, not context.
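These structural rules can be checked mechanically before an article ships. The sketch below is a rough linter under stated assumptions: the word-count thresholds come from the figures quoted above, while the regular expressions and the list of context-dependent openers are our own illustrative heuristics, not part of the cited research.

```python
import re

# Word-count range quoted in the text above.
MIN_WORDS, MAX_WORDS = 134, 167

# Heuristic (assumption): the first sentence contains "is", "are", or "refers to".
DEFINITION_OPENING = re.compile(r"^[^.!?]{1,80}?\b(is|are|refers to)\b", re.IGNORECASE)

# Heuristic (assumption): openers that usually lean on earlier context.
CONTEXT_DEPENDENT = re.compile(
    r"^(this|that|these|those|it|they|as mentioned|however)\b", re.IGNORECASE
)


def check_passage(text):
    """Report whether a passage looks self-contained and definition-first."""
    words = text.split()
    first_sentence = re.split(r"(?<=[.!?])\s+", text.strip(), maxsplit=1)[0]
    return {
        "word_count": len(words),
        "length_ok": MIN_WORDS <= len(words) <= MAX_WORDS,
        "definition_first": bool(DEFINITION_OPENING.search(first_sentence)),
        "self_contained_opening": not CONTEXT_DEPENDENT.match(first_sentence),
    }


print(check_passage("Source Authority is whether the citing domains are trusted."))
```

A passage that fails `self_contained_opening` is usually the quickest fix: restate the subject in the first sentence instead of pointing back at the previous section.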

Layer 3: Schema Layer

Schema Layer is the structured data on your own pages. Here is where the consensus advice online is actually wrong. A Growth Marshal study (n=730 citations, 1,006 pages, 75 queries) found that attribute-rich schema (Product plus Review, fully populated Article plus Person, complete Organization) achieved a 61.7% citation rate. Generic or minimal schema (Article with only headline and datePublished, or barebones Organization with just a name) achieved 41.6%. Pages with no schema at all came in at 59.8%.

Read that again. Half-implemented schema underperforms no schema. This is not the "always add FAQ schema" advice you see everywhere. The signal only works when it's fully populated with accurate, specific attributes.

For AI retrieval specifically, Frase has reported that pages with FAQPage schema appear in Google AI Overviews 3.2 times more often, but the mechanism is indirect. LLMs tokenize JSON-LD as raw text, not as structured data. The real value comes through Google's Knowledge Graph, which does read schema during indexing, and then feeds better-understood content into the answers AI engines pull from.

Layer 4: Freshness Signal

Freshness Signal is how recently the content cited about your brand has been updated or published. Our internal tracking showed citation half-lives ranging from 28 days (Perplexity) to around 70 days (Google AI Overviews). ChatGPT sits in the middle at 45 days. Content that hasn't been meaningfully touched inside those windows starts falling out of citation rotation.

The cheapest way to manage Freshness Signal is not to write new content. It's to keep the content you already have fresh. Pick your ten most strategically important articles. Refresh them on a 30 to 60 day cycle. Update the stats. Add any new developments since publication. Push the changes through IndexNow so Bing, and therefore ChatGPT, picks them up quickly. Expect to see results within two to four weeks.
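Pushing refreshed URLs through IndexNow takes a single HTTP POST. The sketch below builds such a request with the standard library, following the JSON fields in the public IndexNow protocol (`host`, `key`, `keyLocation`, `urlList`). The helper name, host, and key are placeholders, and the request is built but deliberately not sent.

```python
import json
import urllib.request

# Shared IndexNow endpoint; participating search engines also expose their own.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, urls, key_location=None):
    """Build (but do not send) an IndexNow bulk-submission request."""
    allowed_prefixes = (f"https://{host}/", f"http://{host}/")
    foreign = [u for u in urls if not u.startswith(allowed_prefixes)]
    if foreign:
        raise ValueError(f"URLs outside host {host}: {foreign}")
    payload = {"host": host, "key": key, "urlList": list(urls)}
    if key_location:
        # Only needed when the key file is not served from the site root.
        payload["keyLocation"] = key_location
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


request = build_indexnow_request(
    host="www.example.com",
    key="replace-with-your-indexnow-key",
    urls=["https://www.example.com/blog/refreshed-article"],
)
# To submit for real: urllib.request.urlopen(request, timeout=10)
```

The key must also be published as a text file on the same host so the receiving engine can verify ownership. Check the protocol documentation for the exact file location rules before relying on this.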

Layer 5: Distribution Amplification

Distribution Amplification is the sum of everywhere your content is discussed, mentioned, or republished outside of your own domain. This is the layer most SaaS brands underinvest in because the ROI is hard to attribute and the work looks unglamorous. A well-timed LinkedIn Pulse republication. A Reddit post that cites your content without sounding promotional. A guest appearance on a trade podcast. A mention in someone else's newsletter. Each of these is a small vote of third-party corroboration, and AI models are built to listen for exactly that kind of signal.

Search Engine Land reported that AI search engines cite Reddit, YouTube, and LinkedIn most frequently in their study of major platforms. For B2B SaaS, the LinkedIn angle is particularly important. ChatGPT's LinkedIn citations grew 4.2x in three months at the end of 2025. Perplexity's LinkedIn citations grew 5.7x in the same window. If your executives are not publishing on LinkedIn Pulse, you are missing one of the fastest-moving signals in AI search right now.

A Real Case Study: HermQ Moved From 700 to 1,090 Monthly Clicks

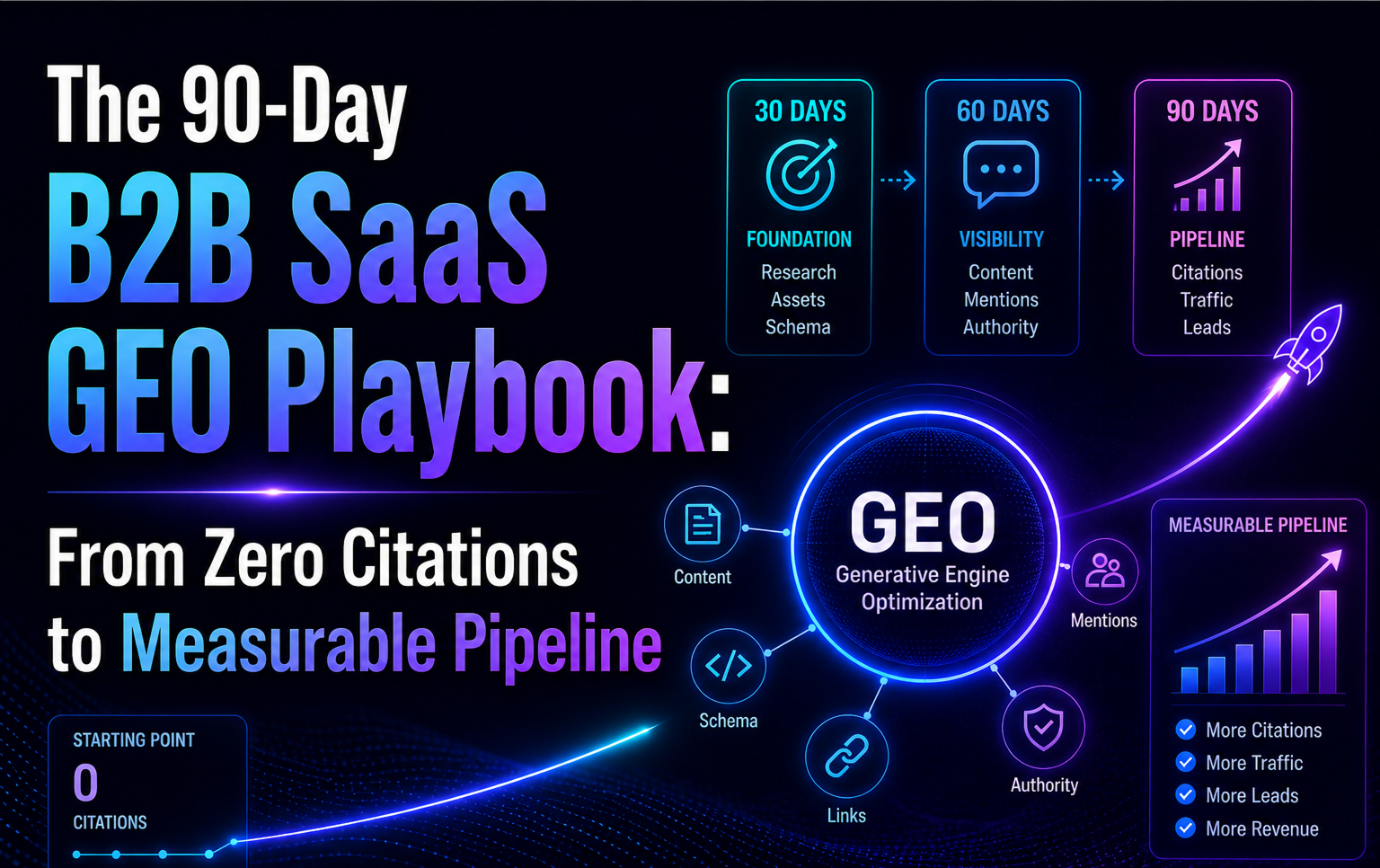

One of our health and wellness clients, HermQ, ran through a compressed version of the Citation Stack framework over 90 days. The results make the abstract concrete.

Starting point: 700 monthly organic clicks, an average position of 19.9 across target queries, and zero measurable presence in AI answers for category-level questions.

The 90-day program looked like this. We spent the first three weeks on foundational technical work: fully populated FAQPage schema across the five most important landing pages, Article plus Person schema with complete sameAs arrays on every blog post, IndexNow integration so Bing (and therefore ChatGPT) picked up changes within hours instead of weeks, and a careful rewrite of the home page's first paragraph to open with a definition-first sentence that could be extracted as a standalone snippet.

The middle four weeks focused on content. Two long-form articles that answered specific buyer questions with definition-first passages, followed by four targeted LinkedIn Pulse articles from the founder that cross-referenced the on-site content. We also added two thoughtful, non-promotional Reddit posts in two health and wellness subreddits that had moderator permission for informational contributions.

The last four weeks were measurement and iteration. We ran Peec AI prompts daily. We watched the Bing Webmaster AI Performance dashboard (Microsoft launched that tool in public preview on February 10, 2026 and it has become a central part of how we measure B2B SaaS citation velocity). We refreshed the three highest-performing pieces every 30 days.

The results at 90 days: 1,090 monthly clicks (+55.7%), average position improved from 19.9 to 13.1, branded query clicks up 73.7%, and measurable citation presence in Google AI Overviews for two of the five target queries. Still not enough citations in ChatGPT or Perplexity to move the needle there, but the direction was set.

The takeaway from HermQ is not that the Citation Stack is magic. It's that the work compounds when it's done in the right order. Technical foundation first. Content second. Distribution third. Measurement fourth. Skip any one of those and the others stop paying off.

How Fast Does AI Citation Freshness Actually Decay?

This is where the raw data got interesting. We looked at a subset of URLs that had been cited in month one but stopped appearing in month two. The curves decayed at different speeds from engine to engine, but all of them trended the same way.

ChatGPT's citation half-life, measured by "first-cited" to "fifty-percent-of-initial-citation-volume", averaged around 45 days. Some pages held their citation rate for longer if the topic was slow-moving (general definitional content). Others decayed faster on time-sensitive topics.

Perplexity was the strictest. It deprioritized content aggressively once newer alternatives became available. The 28-day half-life meant that "evergreen" content on Perplexity is a myth. You either refresh or you fall out.

Gemini behaved similarly to Google AI Overviews, which makes sense given the shared index. Both held citations for around 60 to 70 days before decay became noticeable. This is the longest half-life of the four engines, and it matters because Gemini is the AI engine most directly connected to the enterprise buyer's Google Workspace.

The practical implication is uncomfortable: a B2B SaaS brand that publishes three articles a month and never revisits older content will, on average, see its ChatGPT citation footprint erode by roughly half every six weeks. The math doesn't work in favor of "publish and forget" content strategies.
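To make the half-life arithmetic concrete, here is a small sketch that assumes citation volume follows simple exponential decay, so the fraction remaining after t days is 0.5 raised to the power t divided by the half-life. The exponential shape is a modeling assumption layered on top of the half-lives reported above, not something the tracking data proves.

```python
# Half-lives in days, taken from the figures reported in this section.
HALF_LIFE_DAYS = {"perplexity": 28, "chatgpt": 45, "google_ai_overviews": 70}


def remaining_fraction(days_since_refresh, half_life_days):
    """Fraction of initial citation volume left under exponential decay."""
    return 0.5 ** (days_since_refresh / half_life_days)


for engine, half_life in HALF_LIFE_DAYS.items():
    left = remaining_fraction(90, half_life)
    print(f"{engine}: {left:.0%} of initial citations left after 90 days")
```

Under this model a page left untouched for 90 days keeps a quarter of its ChatGPT citation volume, because 90 days is exactly two 45-day half-lives. Perplexity falls further and Google AI Overviews holds more over the same period.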

Does Schema Markup Really Drive Citations?

Schema is the single most over-recommended GEO tactic online. Every SaaS marketing blog tells you to implement it. Most of them are giving you advice that, according to the best available data, ranges from unhelpful to actively harmful.

The right way to think about schema is twofold. First, LLMs themselves do not parse JSON-LD during live retrieval. SearchVIU's cross-platform test in October 2025 fetched pages with prices embedded in visible HTML, JavaScript-rendered content, JSON-LD, Microdata, and RDFa. Gemini found four of eight prices. ChatGPT found three. Claude found zero. JSON-LD was not extracted by any system during direct fetch. That is a critical finding, because it means the "add schema and ChatGPT will read it" advice is wrong at a mechanical level.

Second, schema still helps indirectly. It helps Google's index understand your content better, which makes your pages more likely to be retrieved by engines that rely on Google's or Bing's search APIs. It helps render rich results in Google search, which can drive clicks and corroborate your authority. And where it is fully populated, it helps AI Overviews select your content as a citation source at 3.2 times the rate of non-FAQ content.

Here is the implementation rule we use with clients: if you cannot fully populate a schema type, don't add it at all. A Product schema with only a name and no pricing, reviews, or ratings is worse for your citation rate than no Product schema. A generic Article schema with only headline and datePublished underperforms a page with no schema at all. The Growth Marshal data is clear on this.
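The rule can be enforced as a pre-publish gate. The sketch below is one way to do that, assuming hypothetical minimum attribute sets per schema type. The attribute lists are illustrative choices of ours, not thresholds from the Growth Marshal study.

```python
# Hypothetical minimum attribute sets; adjust to the types and depth you need.
REQUIRED_ATTRIBUTES = {
    "Product": {"name", "description", "offers", "aggregateRating", "review"},
    "Article": {"headline", "datePublished", "dateModified", "author", "publisher"},
    "Organization": {"name", "url", "logo", "sameAs"},
}

EMPTY_VALUES = (None, "", [], {})


def missing_attributes(schema):
    """Return required attributes that are absent or empty in a JSON-LD dict."""
    required = REQUIRED_ATTRIBUTES.get(schema.get("@type"), set())
    return sorted(a for a in required if schema.get(a) in EMPTY_VALUES)


def should_publish(schema):
    """Ship the markup only if the type is known and nothing required is missing."""
    return schema.get("@type") in REQUIRED_ATTRIBUTES and not missing_attributes(schema)


bare_product = {"@type": "Product", "name": "Example Product"}
print(missing_attributes(bare_product))
print(should_publish(bare_product))
```

Running a check like this in the build pipeline turns "fully populated or nothing" from a guideline into something that cannot be skipped under deadline pressure.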

For B2B SaaS specifically, the schema types that matter most are FAQPage (fully populated with five to ten questions), Article plus Person (with complete sameAs arrays pointing to the author's LinkedIn, Crunchbase, and other professional profiles), Organization (with sameAs to all your brand profiles), and BreadcrumbList. Product schema matters if you are selling a SaaS product with pricing and reviews.
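As an illustration of what "fully populated" can look like, the sketch below builds Article plus Person and FAQPage markup as plain dictionaries and serializes them to JSON-LD. The property names are standard schema.org vocabulary. The helper names and every value passed in are placeholders.

```python
import json


def article_with_author(headline, published, modified, author_name, author_profiles):
    """Build Article plus Person JSON-LD with a sameAs array for the author."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published,
        "dateModified": modified,
        "author": {
            "@type": "Person",
            "name": author_name,
            "sameAs": list(author_profiles),
        },
    }


def faq_page(questions_and_answers):
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in questions_and_answers
        ],
    }


def as_script_tag(schema):
    """Wrap a schema dict in the script tag used to embed JSON-LD in a page."""
    return '<script type="application/ld+json">\n' + json.dumps(schema, indent=2) + "\n</script>"


article = article_with_author(
    headline="Example headline",
    published="2026-01-15",
    modified="2026-03-01",
    author_name="Example Author",
    author_profiles=["https://www.linkedin.com/in/example-author"],
)
print(as_script_tag(article))
```

Generating the markup from one source of truth, rather than hand-editing each page, makes it easier to keep `dateModified` and the `sameAs` arrays accurate across refreshes.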

Where Do ChatGPT, Perplexity, Gemini, and Google AIO Diverge?

One of the most common strategic mistakes we see in B2B SaaS AI strategy is treating "AI" as a monolithic channel. It isn't. Each engine has its own retrieval pipeline, source preferences, and citation behavior.

The implication is that you should not try to win all four engines with the same content strategy. You should pick the two that align with your buyer's actual research behavior and optimize hard for those.

For B2B SaaS selling to mid-market and enterprise, the two engines that matter most are typically Gemini and ChatGPT. Gemini because it's embedded inside Google Workspace, where your buyers work. ChatGPT because it's the largest AI tool by a wide margin, at over 880 million monthly users.

For B2B SaaS selling to smaller businesses and technical buyers, Perplexity and ChatGPT make more sense. Perplexity has a disproportionately engaged technical and research-oriented user base. ChatGPT is the default for anyone who isn't already a power user of a specific AI tool.

The point is not that you ignore the other two. The point is that your resource allocation should reflect where your buyers actually are, not the aggregate statistics of AI usage. A B2B SaaS CMO spending equal effort on all four engines is almost certainly misallocating budget.

What Our Data Cannot Tell You Yet

We want to be direct about the limits of what we measured. Four caveats matter.

First, our panel is twelve brands in one category (GEO and LLM optimization agencies). That is a small N for strong generalizations. The directional findings (top domains retrieved, schema behavior, freshness curves) we are confident about because they match the Princeton and Growth Marshal research. The specific brand-by-brand numbers are our brands, in our category, over one specific 30-day window. Your category will look different.

Second, we did not measure conversion attribution. We know which brands got cited. We don't know, from this data, how many of those citations translated into qualified pipeline. That analysis requires your own GA4 custom channel setup and a longer window than we had here. It's a follow-up report we plan to publish in Q3.

Third, the engines are moving. Microsoft launched Bing Webmaster Tools AI Performance in public preview on February 10, 2026. Google has announced changes to AI Mode rollout. OpenAI has been updating the ChatGPT retrieval pipeline monthly. The findings in this report reflect the engines as they behaved between mid-March and mid-April 2026. Expect drift.

Fourth, we did not measure negative sentiment deeply. We know which brands got cited and how often. We tracked sentiment at a high level (scores ranged from 63 to 77 across our panel). We did not do the full semantic analysis on what the AI actually says about each brand, and that's a layer that matters commercially. A brand cited with neutral sentiment performs differently from a brand cited enthusiastically. The next iteration of this report will cover that dimension.

What Should B2B SaaS CMOs Do First?

After looking at this data for the past two months, here are the five actions we recommend, in order of expected impact and speed to result.

First, audit your Share of Model. Run ten of your most important buyer queries through ChatGPT, Perplexity, and Gemini. Log whether your brand appears, where in the answer it sits, and which competitors show up alongside. This takes an afternoon. It's free. And the results almost always reveal something you didn't expect, usually a competitor you dismissed being treated as the category default.

Second, identify the third-party sources already being retrieved for your category queries. The URLs ChatGPT cites when someone asks about your space are the URLs you need to appear on. Pitch mentions, guest posts, or thoughtful commentary in those specific outlets. A single mention on a source ChatGPT already trusts moves the needle faster than three months of on-site content.

Third, fix your Citation Stack technical layer. Add fully populated FAQPage schema to your five most important commercial pages. Complete your Article and Person schemas with real sameAs arrays. Set up IndexNow so your content gets to Bing, and therefore ChatGPT, within hours instead of weeks. This is a one-time investment of engineering time that pays out every time you publish new content.

Fourth, build a 30 to 60 day refresh rhythm for your ten most strategic articles. Do not publish more. Keep what you have sharper and fresher. This compounds because fresh content keeps its citations, and citations compound brand authority in the index.

Fifth, get your executives publishing on LinkedIn Pulse. The LinkedIn citation curve in AI engines is growing faster than almost any other source. Your CEO and your CMO writing a monthly post each, with thoughtful specifics and personal experience, is the single highest-leverage earned media play in B2B SaaS right now.

If you do all five of these in the next 90 days, you will see measurable movement in your Share of Model. We measure it with Peec AI in our own dashboards. You can measure it manually with a spreadsheet and an afternoon per month. The point is to measure it, in some form, so you know whether the work is working.
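For teams going the spreadsheet route, the manual audit can be summarized with a few lines of code. This sketch assumes a CSV log with hypothetical column names (`month`, `engine`, `query`, `brand_appeared`, `position`, `competitors`). The rows are invented examples, not data from this report.

```python
import csv
import io
from collections import defaultdict


def appearance_rate_by_month(rows):
    """Share of logged answers per month in which the brand appeared."""
    seen, hits = defaultdict(int), defaultdict(int)
    for row in rows:
        seen[row["month"]] += 1
        if row["brand_appeared"].strip().lower() == "yes":
            hits[row["month"]] += 1
    return {month: hits[month] / seen[month] for month in sorted(seen)}


# Illustrative log; in practice, read this from your exported spreadsheet.
audit_csv = """month,engine,query,brand_appeared,position,competitors
2026-03,chatgpt,best tool for X,no,,Competitor A; Competitor B
2026-03,perplexity,best tool for X,yes,3,Competitor A
2026-04,chatgpt,best tool for X,yes,2,Competitor A; Competitor B
2026-04,perplexity,best tool for X,yes,1,Competitor B
"""

rows = list(csv.DictReader(io.StringIO(audit_csv)))
rates = appearance_rate_by_month(rows)
print(rates)
```

Tracking the rate month over month matters more than any single reading, since individual AI answers vary from run to run.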

Key Takeaways

- AI citations in B2B SaaS are concentrated in a predictable set of third-party sources (Reddit, LinkedIn, editorial trade publications, industry directories). Your own domain is rarely the primary citation source.

- The Citation Stack has five independent layers: Source Authority, Passage Structure, Schema Layer, Freshness Signal, and Distribution Amplification. All five compound.

- Schema works only when it is fully populated. Half-implemented schema underperforms pages with no schema at all.

- Citation freshness has a real half-life. Perplexity decays fastest (28 days), Google AI Overviews slowest (70 days). Refresh beats publish.

- Not all engines are equal. Pick the two that match your buyer's behavior and allocate disproportionately.

- Our own brand sits tenth of twelve in our own tracking. We know what works because we have measured it against our own underperformance. Being honest about where the gap is accounts for half the work.

Frequently Asked Questions (FAQs)

What is the difference between GEO and AEO?

Generative Engine Optimization (GEO) focuses on making your brand, content, and entity presence extractable and citable by generative AI systems like ChatGPT, Gemini, and Perplexity. Answer Engine Optimization (AEO) is a subset that focuses specifically on structuring content so AI engines can use it as the answer to a user query. In practice the two overlap heavily. We use GEO as the umbrella term because it covers the broader work of entity and source authority, not just the on-page answer structure.

How long does it take to get a B2B SaaS brand cited by ChatGPT?

In our client data, the earliest measurable citation lift we have seen is around three weeks, and only for brands that already had some third-party presence we could activate. For brands starting from zero (no Wikipedia, no Reddit mentions, limited trade coverage), the realistic window to meaningful AI visibility is 90 to 120 days, and it depends almost entirely on earned media velocity, not on-site content output.

Do I need to implement llms.txt to get cited by AI engines?

No engine currently requires llms.txt for citation. The spec was proposed by Jeremy Howard in September 2024 and adoption is uneven. That said, for a B2B SaaS brand with complex product documentation, implementing a clean llms.txt file is low-effort, high-signal work. It tells AI systems which pages to prioritize and reduces the chance of citation errors where your product is mischaracterized. We recommend it, but we don't consider it a top-five priority.
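For teams that do want to try it, the file itself is plain markdown. The sketch below renders one in the layout the llms.txt proposal describes: an H1 title, a blockquote summary, then H2 sections of annotated links. The function name, site name, and URLs are placeholders, and whether any engine acts on the file remains an open question.

```python
def render_llms_txt(site_name, summary, sections):
    """Render an llms.txt body: H1 title, blockquote summary, H2 link sections."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


llms_txt = render_llms_txt(
    site_name="Example SaaS",
    summary="Example SaaS is a placeholder product used to illustrate the file layout.",
    sections={
        "Docs": [
            ("Getting started", "https://www.example.com/docs/start", "Setup and first steps"),
            ("Pricing", "https://www.example.com/pricing", "Plans and limits"),
        ],
    },
)
print(llms_txt)
```

The output would be served as `/llms.txt` at the site root. Regenerating it from the same list that drives your sitemap is one way to keep the two from drifting apart.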

Which AI engine should a B2B SaaS company prioritize?

It depends on where your buyers work. For enterprise and mid-market B2B SaaS with buyers inside Google Workspace, prioritize Gemini and Google AI Overviews. For B2B SaaS targeting technical or research-oriented users, prioritize Perplexity and ChatGPT. For most horizontal B2B SaaS plays, ChatGPT is the largest single AI surface and deserves disproportionate attention, but the answer is always "measure where your buyers actually ask."

Can I measure my AI citation rate for free?

Yes, with a spreadsheet and an hour per month. Pick ten queries that reflect how your buyers would describe their problem. Run each query through ChatGPT, Perplexity, and Gemini once a month. Log whether your brand appears, where in the response it sits, and which three competitors show up alongside. This gives you a baseline you can track over time. Paid tools like Peec AI and Profound automate this with much larger prompt sets and cross-engine coverage, and they are worth the spend once you've proven internally that AI citations are a priority, but they are not required to start.

Should I focus on getting cited in ChatGPT answers or on ranking in Google AI Overviews?

This is a trick question. They feed each other. Content that ranks well in Google often gets cited by Gemini. Content on domains that Bing indexes well often gets cited by ChatGPT. Content that gets cited in AI answers drives branded search volume, which improves Google rankings. The right framing isn't "pick one". It's "build the foundational work once (schema, site structure, IndexNow, llms.txt, entity presence) and then invest disproportionately in the earned-media work that feeds both engines."