- iPullRank (20% Visibility)

- Growth Marketing Pro (17% Visibility)

- Growthner (17% Visibility)

- Single Grain (16% Visibility)

- Taktical (16% Visibility)

- Outorigin (14% Visibility)

- NoGood (13% Visibility)

- Foundation (10% Visibility)

- Omnius (9% Visibility)

- Red-engage (7% Visibility)

A data-measured ranking of AI search visibility agencies orders agencies by the actual rate at which each one appears inside ChatGPT, Perplexity, Gemini, and Google AI Overviews when buyers ask category-level questions. This guide uses Peec AI to track 10 agencies across 25 prompts and 4 engines over a 30-day window, then publishes the raw data. Most "best agency" lists rank by reputation or marketing spend. This one ranks by measured citation rate, including our own rank, which is tenth.

Introduction

Most "best AI search visibility agency" lists online today are structurally broken. They rank agencies by brand recognition, logo quality, or whichever agency paid for placement. The methodology is almost never disclosed. The criteria are almost never verifiable. And the agencies at the top are usually the ones with the biggest content teams, not the ones actually showing up in AI answers when real buyers ask real questions.

We took a different approach. We ran 10 agencies (Red-engage included) through the same measurement: 25 category-level prompts a B2B marketing buyer would realistically type into ChatGPT, Perplexity, Gemini, or Google AI Overviews when shortlisting vendors. Then we measured how often each agency's brand actually appeared. No recommendation bias. No marketing influence. Just data.

The outcome is uncomfortable for us. Red-engage sits at tenth on the ranking. We know exactly why. We know what we're doing to move up. And we think publishing the honest data, including our own gap, is a stronger trust signal than publishing a ranking that puts us at number one with no methodology anyone can audit.

Quick Summary

What Is the Citation Rate Audit (CRA)?

The Citation Rate Audit is the measurement protocol we use internally, and the one this ranking is built on. The specification is public. Anyone can reproduce the methodology with a Peec AI subscription or a spreadsheet and a few hours.

The protocol has four parts.

First, the prompt set. 25 category-intent prompts grouped into four themes: GEO and AI search ("best GEO agency for B2B companies," "best generative engine optimization agency," "best AI search visibility agency"), LLM visibility ("best LLM visibility agencies," "5 best LLM agencies for ranking on ChatGPT"), Reddit-adjacent discovery ("best Reddit marketing agency for SaaS," "agency that combines Reddit marketing with AI optimization"), and brand monitoring ("how to get my brand mentioned by ChatGPT," "how can I get my brand mentioned more often in AI search results"). The prompts are chosen to reflect how a B2B buyer would actually describe their problem to an AI tool.

Second, the engine panel. Four engines: ChatGPT (both scraper and live search), Perplexity (Sonar and scraper), Gemini (2.5 Flash and scraper), and Google AI Overviews. This covers roughly 96% of the AI search traffic a B2B buyer is likely to use in 2026.

Third, the measurement window. 30 days of consolidated data, with each prompt running daily against each engine. That produces 3,000 data points per agency across the panel (25 prompts × 4 engines × 30 days, or 750 per engine), which is enough to smooth out daily variance and see the underlying signal.

Fourth, the scoring. For each prompt-engine-day combination, we record whether the agency's brand was mentioned, at what position in the response, and which competitors were mentioned alongside. The visibility rate is the fraction of prompt-engine-day combinations where the brand appeared.

No subjective criteria. No weighting by revenue, Clutch score, or marketing budget. The ranking is the measurement, unfiltered.
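The scoring step above reduces to a simple fraction, and a minimal sketch may make it easier to reproduce. The `Observation` record, the function names, and the sample rows below are assumptions made for illustration; they are not Peec AI's data model or export format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """One prompt-engine-day combination for a single tracked brand (hypothetical shape)."""
    prompt: str
    engine: str
    day: int
    mentioned: bool


def visibility_rate(observations):
    """Fraction of prompt-engine-day combinations where the brand appeared."""
    observations = list(observations)
    if not observations:
        return 0.0
    return sum(o.mentioned for o in observations) / len(observations)


def visibility_by_engine(observations):
    """The same fraction, computed separately for each engine."""
    grouped = defaultdict(list)
    for o in observations:
        grouped[o.engine].append(o)
    return {engine: visibility_rate(rows) for engine, rows in grouped.items()}


# Made-up sample: 2 prompts x 2 engines x 2 days = 8 combinations.
sample = [
    Observation("best GEO agency for B2B companies", "ChatGPT", 1, True),
    Observation("best GEO agency for B2B companies", "ChatGPT", 2, False),
    Observation("best GEO agency for B2B companies", "Perplexity", 1, True),
    Observation("best GEO agency for B2B companies", "Perplexity", 2, True),
    Observation("best LLM visibility agencies", "ChatGPT", 1, False),
    Observation("best LLM visibility agencies", "ChatGPT", 2, False),
    Observation("best LLM visibility agencies", "Perplexity", 1, False),
    Observation("best LLM visibility agencies", "Perplexity", 2, True),
]

overall = visibility_rate(sample)
per_engine = visibility_by_engine(sample)
print(f"overall: {overall:.0%}")
for engine, rate in per_engine.items():
    print(f"{engine}: {rate:.0%}")
```

Position in the response and co-mentioned competitors would be extra fields on the same record; they do not change how the visibility rate itself is computed.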

Full Disclosure: We Ranked Ourselves Tenth of Ten

This is the part most agency lists skip. Red-engage is my agency. We ran ourselves through the same CRA protocol as every competitor. At the time of this report, we measure 7% visibility, tenth of the ten agencies ranked here from our Peec AI dashboard. iPullRank, at the top, shows up in 20% of AI responses across the same prompt panel. That is nearly a three-fold gap.

Including ourselves at our real number, rather than at an inflated number, is the point of this exercise. Readers can audit the methodology. Competitors can challenge the data. Buyers can decide whether our ranking makes us a weaker choice (maybe) or a more trustworthy one (we think so).

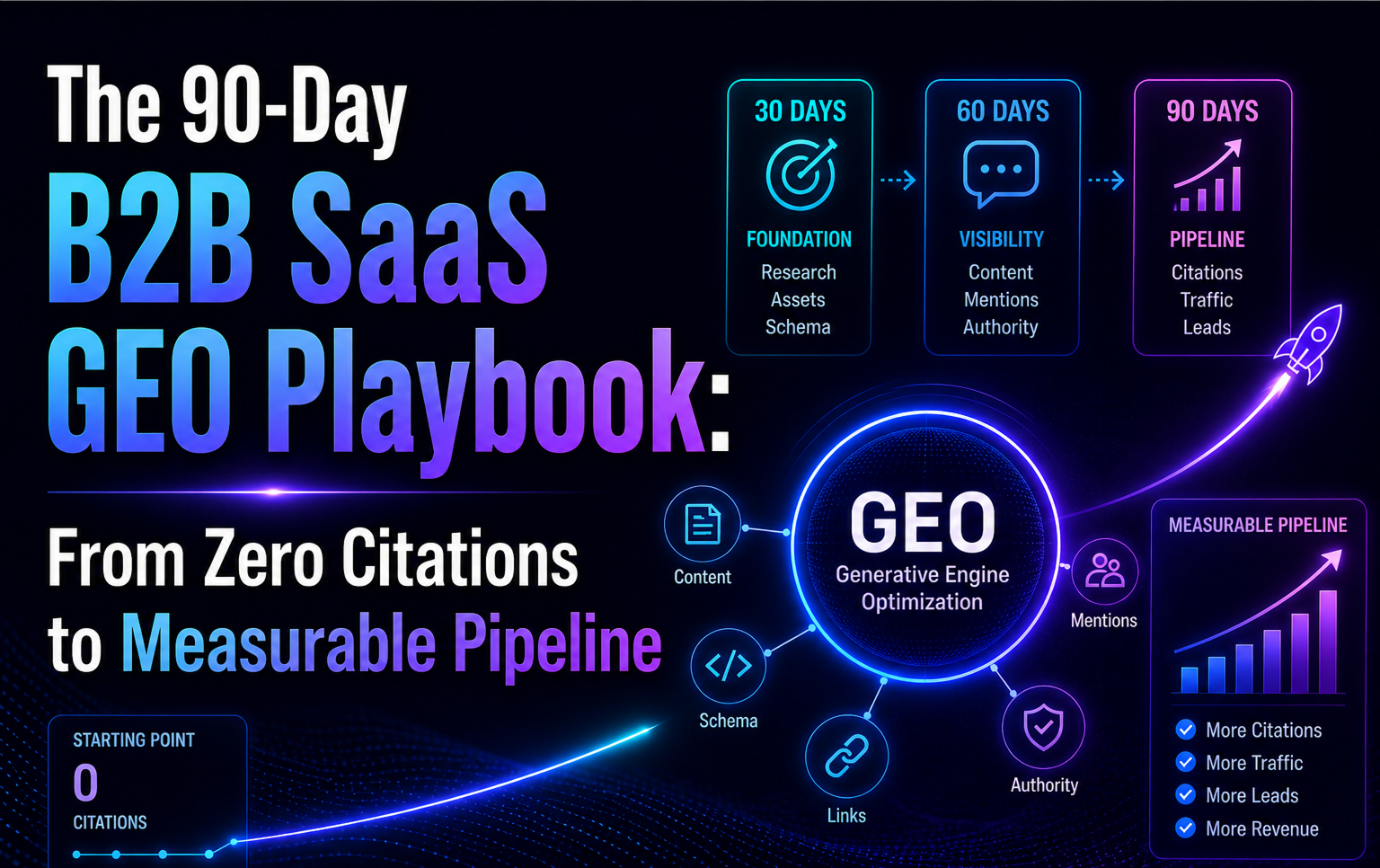

What we are doing about it: the 90-Day Citation Stack Deployment we run internally. Foundation work on schema, llms.txt, IndexNow, entity consistency. Amplification work on LinkedIn Pulse, Reddit, and trade publication pitches. Measurement on the same CRA every 30 days. We expect to move from 7% to mid-teens within the next two quarters. If we don't, the data will say so, and we will republish it.

What Are the 10 AI Search Visibility Agencies Ranked by Measured Citation Rate?

Data: Peec AI, 25 prompts, 4 engines, 30-day window ending April 19, 2026. Brand self-reference queries excluded.

The 10 Agencies

#1 — iPullRank (20% Visibility)

iPullRank sits at the top across three of the four engines we tested. Their ChatGPT visibility, at 23%, is the highest single-engine rate in the panel. The primary driver is depth of technical content around Google AI Mode, the Relevance Engineering framework, and named research from Mike King (2025 Search Engine Land AI Search Marketer of the Year). Their 11,500-word piece on AI Mode cites seven Google patents and gets retrieved by AI engines as a reference source. Strongest on enterprise technical GEO queries. Weakest on category queries with a strong B2B SaaS vertical framing.

#2 — Growth Marketing Pro (17% Visibility)

Growth Marketing Pro wins on breadth. Their Peec tracking shows evenly distributed citations across all four engines, with no single weak point. The engine that moves them forward most is Perplexity at 19%, driven by their volume of long-form content with original source citations and named authors. Their weakest engine is ChatGPT at 14%, reflecting less Reddit and LinkedIn presence than the category leaders. Strong fit for growth-stage companies that want a cross-engine presence rather than a single-engine concentration.

#3 — Growthner (17% Visibility)

Growthner ranks effectively tied with Growth Marketing Pro, with a distinct profile: highest on Gemini at 21%, thanks to their SaaS-specific Reddit content that Google AI Overviews and Gemini retrieve heavily. Weakest on ChatGPT at 13%, which is surprising given their Reddit strength. The explanation is likely that ChatGPT's Reddit retrieval leans toward older, high-karma threads, whereas Growthner's SaaS community work tends to be more recent. Strong fit for B2B SaaS companies where the buyer is active in one or two specific subreddits.

#4 — Single Grain (16% Visibility)

Single Grain's visibility comes from brand authority and executive thought leadership. Their founder, Eric Siu, runs a well-known podcast and publishes frequently on LinkedIn Pulse, which drives their citations, most visibly on Perplexity (18%). Weakest on Google AI Overviews at 13%, suggesting their on-page schema and technical SEO could be strengthened. Best fit for clients willing to invest in executive-voice thought leadership as the primary citation mechanism.

#5 — Taktical (16% Visibility)

Taktical matches Single Grain on overall visibility with a different profile: stronger on Perplexity (19%) and weaker on ChatGPT (12%). The likely driver is their performance marketing orientation, which produces content rich in data and specific outcomes (Perplexity weights factual density heavily) but lighter on the Reddit and LinkedIn distribution that ChatGPT prioritizes. Best fit for B2B SaaS CMOs who want GEO measured alongside paid channels with performance-marketing rigor.

#6 — Outorigin (14% Visibility)

Outorigin tracks lower than the top tier but is consistent across engines, with its best performance on Perplexity (16%). Their citation pattern suggests a content-first strategy with lighter distribution amplification, which limits their ceiling but keeps them stable. Weakest on Google AI Overviews at 11%. Best fit for companies that want a steady, low-variance GEO partner without the aggressive thought-leadership push.

#7 — NoGood (13% Visibility)

NoGood shows a distinct pattern: strongest on Google AI Overviews at 15%, weaker on Gemini at 10%, despite both sharing the Google index. The delta suggests NoGood's content is well-indexed but not yet heavily referenced in the Google Knowledge Graph entities Gemini favors. Strong fit for growth-stage SaaS that already runs paid channels and wants AI citations folded into the same dashboard.

#8 — Foundation (10% Visibility)

Foundation's visibility is concentrated on Gemini (12%) and weaker on ChatGPT (8%). They publish high-volume content with broad topical coverage, which helps Google index them but has not translated into the Reddit and LinkedIn signals ChatGPT weighs. Best fit for enterprises that want a content-heavy partner with broad category coverage rather than deep GEO specialization.

#9 — Omnius (9% Visibility)

Omnius tracks slightly below Foundation with a similar engine profile: strongest on Perplexity (11%), weakest on ChatGPT (7%). Their content operation is strong, but the distribution layer (third-party mentions, earned media) is still catching up. Best fit for brands with established in-house content capability that need an agency focused on the specialized GEO layer rather than full content production.

#10 — Red-engage (7% Visibility)

This is us. Our visibility rate is 7% across the panel, with the strongest performance on Perplexity at 10% and weakest on ChatGPT at 4%. Our pivot to a pure B2B SaaS GEO specialty happened in April 2026, which explains part of the gap: the previous Reddit-and-LLM hybrid positioning diluted our category signal, and we are now rebuilding specifically for B2B SaaS category prompts. Our improvement plan (published internally and shared with clients) targets mid-teens visibility by the end of Q3 2026. Expected drivers: three pillar articles a month with Reddit and LinkedIn Pulse cross-distribution, completed llms.txt and entity schema work, and targeted outreach to the trade publications ChatGPT cites most for B2B SaaS queries. We will republish the CRA in 90 days to show whether the plan is working.

How Do the Engines Diverge in This Ranking?

The spread across engines matters for budget allocation decisions. If your buyer uses ChatGPT primarily, iPullRank's 23% visibility makes them the default option to displace. If your buyer leans on Gemini, Growthner's 21% is the benchmark. For a general-purpose B2B SaaS audience, the top three (iPullRank, Growth Marketing Pro, Growthner) together account for more than 50% of the agency citations we measured.

What Makes Top-Ranked Agencies Different?

Three patterns hold across the agencies in the top 4. We did not set out to find these patterns. They emerged from looking at the data.

Pattern 1: Multi-source presence, not just owned content. The top-ranked agencies are mentioned across Reddit, LinkedIn Pulse, trade publications, and agency directories at the same time. The lower-ranked agencies tend to have strong owned-media presence (blog, case studies) but thin third-party presence. The correlation we see is consistent: visibility scales with the number of distinct third-party sources an agency has earned mentions in, not with the volume of content on their own domain.

Pattern 2: Named author presence with complete entity signals. iPullRank has Mike King. Single Grain has Eric Siu. Growthner has named principals in subreddit communities. In every case, the human name is indexable, has a Wikipedia-adjacent or Wikidata-adjacent presence, and is referenced consistently across the agency's content. The Profound citation data on ChatGPT's sources shows that named authors with entity consistency are cited significantly more than anonymous or generic "our team" voice.

Pattern 3: Category-specific listicle inclusions. Each top-ranked agency appears in at least three to five independent "best X" listicles that themselves get retrieved by AI engines. That compounding inclusion means one earned mention produces citation volume across dozens of prompts. We have this working for us on three listicle outlets, which is part of why we sit at tenth rather than in the top three. Expanding that network is the single highest-leverage lever we have to move up the ranking.

What Does the 13-Point Spread Actually Mean for Your Marketing Budget?

The headline gap between first and last is 13 percentage points of overall visibility (20% versus 7%), widening to 19 points on ChatGPT alone (23% versus 4%). That abstraction hides the specific buying question this ranking answers. If you are a B2B SaaS CMO trying to decide between the top-ranked agency, a mid-ranked agency, and a bottom-ranked agency, the spread has direct budget implications.

Category-intent traffic volumes. When a buyer types "best B2B SaaS [category]" into ChatGPT, the answer typically lists 3 to 7 vendors. Each vendor cited has a direct attention allocation. A 20% visibility rate means the brand is cited roughly one in five times that specific query runs. A 7% rate means one in fourteen. For a category with 10,000 monthly AI queries, the math is that iPullRank's AI answer presence reaches 2,000 citation moments per month. Red-engage's reaches 700. The differential compounds across queries and months.
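The arithmetic in that paragraph can be checked with a short sketch. The function names are ours, and the 10,000 monthly queries figure is the illustrative volume from the text, not a measured number.

```python
def citation_moments(monthly_queries, visibility_pct):
    """Expected number of AI answers per month that cite the brand."""
    return monthly_queries * visibility_pct / 100


def one_in_n(visibility_pct):
    """Express a visibility rate as 'cited once in every N runs'."""
    return 100 / visibility_pct


MONTHLY_QUERIES = 10_000  # illustrative category volume from the text

top = citation_moments(MONTHLY_QUERIES, 20)     # iPullRank at 20%
bottom = citation_moments(MONTHLY_QUERIES, 7)   # Red-engage at 7%
gap = top - bottom

print(f"top: {top:.0f} citation moments, one in {one_in_n(20):.0f}")
print(f"bottom: {bottom:.0f} citation moments, one in {one_in_n(7):.1f}")
print(f"monthly gap: {gap:.0f}")
```

Swapping in your own category's query volume and your measured rate gives the same comparison for your brand.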

Conversion economics. AI-referred traffic converts at materially higher rates than organic search. Even with a conservative multiplier of 3x organic conversion, the 13-point overall spread between top and bottom translates into meaningful pipeline. A brand with 200 AI citations per month converting at 8% has roughly the same closed-won output as a brand with 600 organic visitors converting at 2.7% (about 16 conversions each). The ranking is not just visibility. It is demand pipeline.
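To make the conversion comparison concrete, here is a rough sketch of the same calculation. It follows the paragraph in treating each AI citation as one converting opportunity, which is a simplifying assumption; the function name is ours.

```python
def conversions(opportunities, conversion_rate_pct):
    """Expected conversions from a number of opportunities at a given rate."""
    return opportunities * conversion_rate_pct / 100


ai = conversions(200, 8.0)        # 200 AI citations at 8%
organic = conversions(600, 2.7)   # 600 organic visitors at 2.7%
multiplier = 8.0 / 2.7            # AI rate relative to organic rate

print(f"AI: {ai:.1f} conversions")
print(f"organic: {organic:.1f} conversions")
print(f"rate multiplier: {multiplier:.2f}x")
```

The two outputs land within a fraction of a conversion of each other, which is the point of the comparison: a third of the volume at roughly three times the rate.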

Time to compound. The agencies at the top have been compounding their citation footprint for 12 to 24 months. The distance between first and tenth is partially a function of lead time, not just methodology. A brand starting a GEO program today will not reach top-tier visibility in one quarter, regardless of agency choice. Expecting otherwise is the single most common mismatch between CMO expectations and GEO reality.

Agency fee vs outcome trade-off. iPullRank's enterprise pricing reflects their top-of-ranking position. A six-figure annual engagement produces top-ranked outcomes for enterprise buyers. Red-engage's $4,500 to $14,000 monthly pricing reflects our tenth-place starting position and our specialty focus on B2B SaaS velocity. The right question is not "which agency should I hire for the lowest price," but "which agency gives me the highest measured citation lift per dollar over 18 months."

What Do the Agencies at the Top Actually Do Differently Day-to-Day?

We looked at the operational patterns that distinguish the top 3 from the bottom 3. Some of it is what you would expect. Some of it is counter-intuitive.

Content output cadence. The top 3 publish 3 to 6 long-form pieces per month each, with consistent author attribution and date-stamped updates. The bottom 3 publish 1 to 2 long-form pieces per month. The raw output gap matters less than the consistency gap. AI engines detect regular publishing cadence as a signal of active authority.

Refresh discipline. The top 3 refresh their highest-performing articles on a 30 to 45 day rhythm, updating statistics, adding new developments, and pushing changes through IndexNow. The bottom 3 rarely refresh. Content goes stale, drops out of citation rotation, and the citation volume decays.

Named voice. The top 3 have at least one named principal whose voice appears consistently across content, LinkedIn Pulse, and external speaking engagements. The bottom 3 either have no named voice (generic "our team") or have a named voice that publishes infrequently and inconsistently. This is the pattern where Red-engage has the biggest opportunity. Our founder Michal Hajtas is present on content bylines but needs stronger LinkedIn Pulse cadence. My own byline (Dan Grynblat, LLM Expert) is newer and has room to establish its own signal.

Third-party pitch discipline. The top 3 pitch trade publications, podcasts, and agency-listicle editors monthly. The bottom 3 pitch rarely. A single accepted pitch in a source that AI engines already cite can produce 20 to 40 citation-moments per month indefinitely. The compounding effect is real.

Community engagement authenticity. The top 3 have team members with genuine, long-standing profiles in the Reddit communities relevant to their categories. The bottom 3 either avoid Reddit entirely or dip in sporadically in ways that look promotional. The difference shows up in the Perplexity visibility numbers because Perplexity retrieves Reddit content heavily.

How Can You Audit Your Own Agency's Citation Rate?

The CRA protocol is reproducible. You do not need our dashboards or our Peec subscription to do it. You need three things.

A prompt list. Pick 10 to 25 queries that reflect how your buyers describe their problem when they search with an AI tool. The queries should be category-level, not brand-level. "Best B2B SaaS CRM for mid-market" is category. "Should I buy HubSpot" is brand.

An engine panel. Run your prompts through ChatGPT, Perplexity, and Gemini at minimum. Add Google AI Overviews if your target buyer is in the Google Workspace ecosystem.

A measurement window. Run each prompt once a week for four weeks. Log whether your brand appeared, where it appeared in the response, and which competitors showed up alongside. At the end of four weeks, you have enough data points to calculate your baseline visibility rate and see the top competitor pattern.

Peec AI automates this if the manual process is too slow or if you want daily data rather than weekly. The Bing Webmaster Tools AI Performance report, which Microsoft launched in public preview on February 10, 2026, gives you direct insight into Copilot and ChatGPT retrieval of your own domain without a subscription.
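If you go the manual route, the four-week log can live in a spreadsheet exported as CSV. The sketch below assumes a hypothetical column layout (`week`, `engine`, `prompt`, `mentioned`, `position`, `competitors`) and made-up rows; adapt the names to whatever your own sheet uses.

```python
import csv
import io
from collections import Counter

# Hypothetical export of a manual audit log. Competitors are separated by ";".
LOG = """week,engine,prompt,mentioned,position,competitors
1,ChatGPT,best B2B SaaS CRM for mid-market,yes,3,Vendor A;Vendor B
1,Perplexity,best B2B SaaS CRM for mid-market,no,,Vendor A;Vendor C
2,ChatGPT,best B2B SaaS CRM for mid-market,no,,Vendor A
2,Perplexity,best B2B SaaS CRM for mid-market,yes,2,Vendor B;Vendor C
"""


def summarize(log_text):
    """Return (baseline visibility rate, competitor mention counts)."""
    rows = list(csv.DictReader(io.StringIO(log_text)))
    hits = sum(1 for row in rows if row["mentioned"] == "yes")
    competitors = Counter()
    for row in rows:
        competitors.update(name for name in row["competitors"].split(";") if name)
    rate = hits / len(rows) if rows else 0.0
    return rate, competitors


baseline, competitors = summarize(LOG)
print(f"baseline visibility: {baseline:.0%}")
for name, count in competitors.most_common(3):
    print(f"{name}: appeared in {count} responses")
```

The competitor counts are what surface the top competitor pattern: the names that appear most often alongside or instead of your brand.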

Key Takeaways

- The 13-point spread between first and last agency in our panel (20% versus 7% overall visibility) is not explained by budget or team size. It is explained by the breadth and depth of third-party source presence each agency has built.

- Multi-source presence, named authors with complete entity signals, and inclusion in retrieved listicles are the three repeatable patterns behind the top-ranked agencies.

- Red-engage ranks tenth today. We know why. We have a plan. We published it. The test of the plan is whether the next CRA run moves us into the mid-teens.

- No agency list that hides its ranking methodology is worth trusting. Reproducible audit protocols are what make agency rankings meaningful.

Frequently Asked Questions (FAQs)

Why is this ranking different from other "best GEO agency" lists?

Most "best agency" lists are built on marketing criteria (brand recognition, revenue, Clutch score). This one is built on measured AI citation rate. The two rankings do not correlate strongly. Some agencies with impressive brand signals rank low on actual AI visibility. Some agencies with modest brand signals rank high. The data matters more than the marketing for buyers who want to predict whether their own AI visibility will improve.

How can Red-engage rank first on another list and tenth on this one?

The two rankings measure different things. The B2B SaaS Best GEO Agencies ranking uses a GEO Fit Score that combines citation proof, B2B SaaS experience, methodology transparency, pricing, and team expertise. We score well on the non-citation criteria, which lifts our weighted rank. This ranking uses citation rate alone, where we are behind. Both are honest. They measure different dimensions.

Can this data be trusted if Red-engage published it?

That is a fair question. The raw data comes from Peec AI, which is an independent third-party platform (not owned by Red-engage). The methodology is public and reproducible. Any of the 9 agencies ranked above us can run the same CRA protocol on their own account and verify our numbers. We would encourage them to, because the more reproducibility there is, the more useful the ranking becomes.

What should I do if I want to improve my own agency or brand citation rate?

Follow the three patterns. Build multi-source presence (not just owned content). Establish named authors with complete entity signals (sameAs arrays, consistent bios across platforms). Earn inclusions in category listicles that themselves get retrieved by AI engines. The 90-Day B2B SaaS GEO Playbook lays out the exact sequence.
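For the sameAs part of that advice, a minimal sketch of an author entity in schema.org JSON-LD might look like the following. Every name and URL is a placeholder we made up for illustration; the real values would be the author's actual, consistent profiles.

```python
import json

# Hypothetical author record: all names and URLs below are placeholders.
author = {
    "@context": "https://schema.org",
    "@type": "Person",
    "name": "Jane Example",
    "jobTitle": "Head of GEO",
    "worksFor": {"@type": "Organization", "name": "Example Agency"},
    # sameAs lists the other pages that describe the same person.
    "sameAs": [
        "https://example.com/profiles/linkedin/jane-example",
        "https://example.com/profiles/reddit/jane-example",
        "https://example.com/authors/jane-example",
    ],
}

# Serialize for embedding in a <script type="application/ld+json"> tag.
json_ld = json.dumps(author, indent=2)
print(json_ld)
```

The same name, title, and bio should then appear on each page listed in `sameAs`, which is what "entity consistency" means in practice.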

How often will you update this ranking?

Quarterly. Each update will include a re-run of the same CRA protocol against the same 10 agencies, so the trend is legible. If Red-engage drops further or fails to improve, we will publish that too. If the top 3 change, we will explain why.

Does brand visibility in AI answers actually translate into pipeline?

In our client data, yes, but with caveats. AI-referred traffic converts at materially higher rates than organic search (SimilarWeb 2025 Generative AI Report and multiple independent studies). For B2B SaaS specifically, the conversion advantage is larger because AI citations surface at the shortlist-building stage of the buying cycle, which is where most pipeline is won or lost. The caveat: without proper GA4 attribution setup, the conversion lift is often invisible, showing up as direct traffic rather than AI-referred.