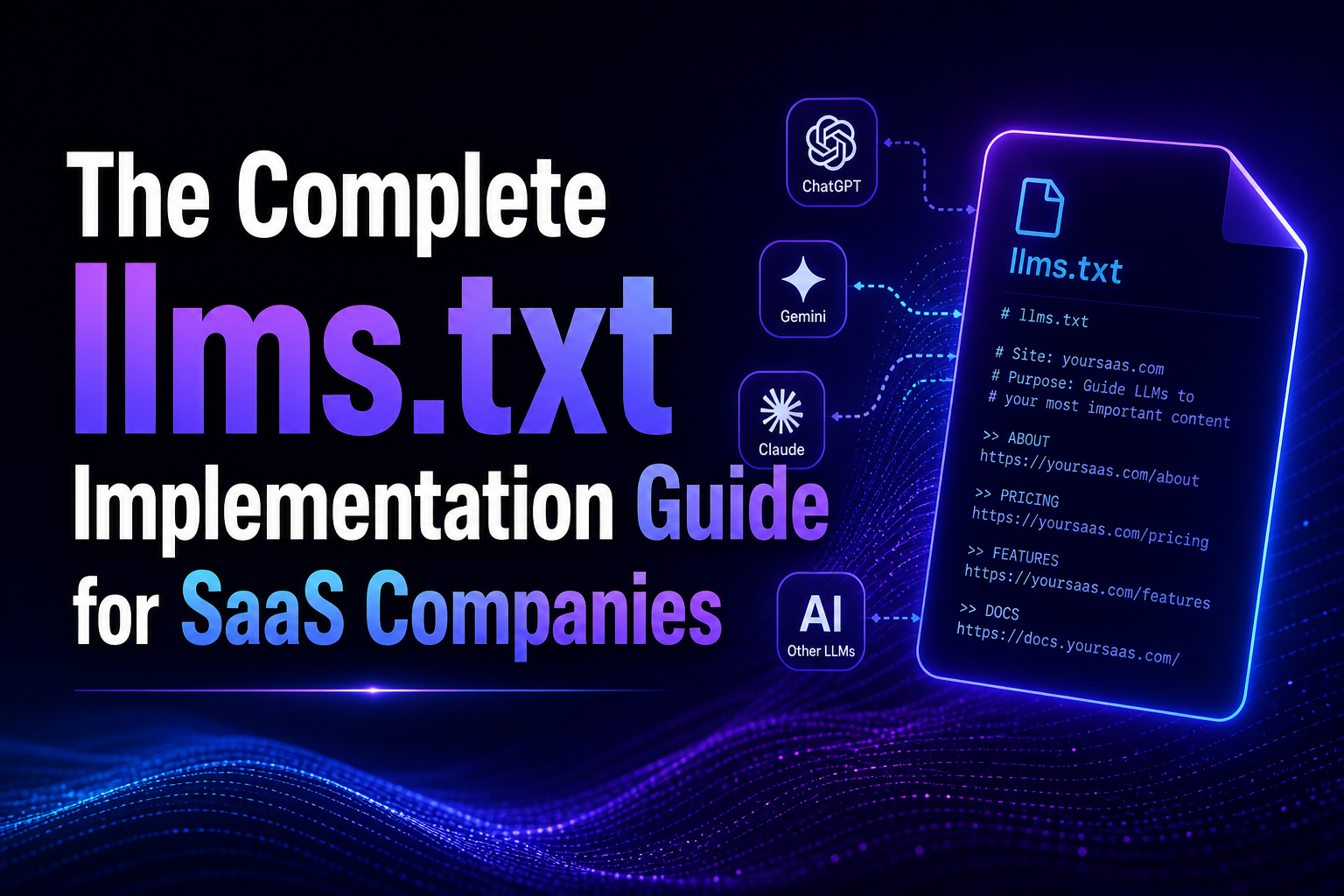

The llms.txt specification is a lightweight text file placed at a domain's root that gives AI systems a structured entry point to a site's most important content. Proposed by Jeremy Howard in September 2024, it is already adopted by technically sophisticated brands (FastHTML, Stripe, Cloudflare) and still largely absent from B2B SaaS. This guide covers the official spec, the Kalicube entity-metadata extension, and complete templates for eight SaaS use cases, written for teams that want the implementation to be right on the first deploy.

Introduction

Almost every B2B SaaS brand we audit has a robots.txt. Almost none have an llms.txt. The asymmetry is revealing. Teams understand that telling crawlers which URLs to avoid is important. They have not yet internalized that telling AI systems which URLs to read first is even more important.

The difference between the two files is the difference between restriction and invitation. robots.txt is a no-fly zone map. llms.txt is a welcome packet. It signals to AI systems which of your pages are most authoritative, which are secondary, and which are optional. When a system like ChatGPT or Perplexity decides what to prioritize from your domain, llms.txt is an input it can use, but only if you provide one.

This guide is for B2B SaaS teams that want to implement llms.txt correctly the first time. It covers the official spec exactly as Jeremy Howard documented it in September 2024, the Kalicube entity-metadata extension that adds disambiguation signals, and complete templates that can be adapted for a specific SaaS brand in about 30 to 45 minutes.

What Is llms.txt and Why Should SaaS Companies Care?

llms.txt is a text file at the root of a domain that serves as a structured index for AI systems. The file lists the site's most important URLs, grouped by purpose, in a format AI systems can parse reliably. The stated goal in Jeremy Howard's original proposal (September 3, 2024) is to help AI systems find relevant, authoritative content on a site without relying on web crawling alone.

For B2B SaaS specifically, llms.txt solves three problems.

First, entity disambiguation. When a user asks ChatGPT about a SaaS brand whose name is similar to another entity (a common brand, a celebrity, a historical figure), the AI has to guess which entity is meant. An llms.txt file with a clear one-line description anchors the correct entity from the start.

Second, content prioritization. A B2B SaaS site has dozens to hundreds of URLs. Not all are equally valuable for AI citation. llms.txt lets you tell the AI system which pages are the authoritative home for product information, which are secondary resources, and which are optional context.

Third, canonical naming. Brand names get transliterated, translated, and misspelled in AI answers constantly. llms.txt is one place where you can assert the canonical brand name, the canonical category, and the canonical differentiators in a way AI systems can reference.

What's the Difference Between llms.txt and llms-full.txt?

Two files come up in most llms.txt discussions. Most implementations only need llms.txt.

llms.txt is the lightweight version. It is the summary file. Think of it as the menu. H1 brand name, blockquote description, a handful of H2 sections with linked URLs, optionally an "Optional" section with lower-priority content.

llms-full.txt is the expanded version. It contains the full content of the pages referenced in llms.txt, compiled into one large text file. This is optional and more common for documentation-heavy sites (FastHTML uses it extensively). For typical B2B SaaS sites, llms-full.txt is overkill and can be skipped.

The Jeremy Howard spec also mentions llms-ctx.txt and llms-ctx-full.txt as expansion variants. These are specialized and rarely needed.

What Does the Official llms.txt Specification Require?

The spec is minimal by design. Here is what it asks for, along with the serving basics that make the file reachable.

Required. An H1 at the top of the file containing the brand or project name. This is the only required element.

Recommended. A blockquote immediately following the H1, containing a short description of the brand. After that, optional free-form markdown (paragraphs and lists, but no headings) before the first H2. Then H2 sections, each containing a list of links in the form [name](url), optionally followed by a colon and a short description. An H2 titled "Optional" has a special meaning: it holds URLs that AI systems can skip without losing meaning when a shorter context is needed.

Format. Plain markdown. UTF-8 encoding. Placed at the domain root (/llms.txt). Returns HTTP 200. No authentication required.

Here is a minimal example from the spec, adapted for a B2B SaaS:

# Your Brand Name

> Your Brand is a B2B SaaS company providing [category] for [ICP]. Founded in [year], based in [location].

Your Brand helps [ICP] achieve [specific outcome] through [differentiator].

## Docs

- [Getting Started Guide](https://yourdomain.com/docs/getting-started): Core concepts and first setup

- [API Reference](https://yourdomain.com/docs/api): Complete API documentation

- [Integrations](https://yourdomain.com/docs/integrations): All available integrations

## Company

- [About Us](https://yourdomain.com/about): Team, history, values

- [Case Studies](https://yourdomain.com/case-studies): Client outcomes with metrics

- [Blog](https://yourdomain.com/blog): Expert articles on [category]

## Optional

- [Press Kit](https://yourdomain.com/press): Logos, screenshots, brand assets

- [Legal](https://yourdomain.com/legal): Terms, privacy, compliance

That is a valid llms.txt for most B2B SaaS brands. Thirty minutes to produce if you already know which URLs to include.
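The structure is regular enough to lint before you deploy. The sketch below is one way to do that in Python. It is not an official parser: `parse_llms_txt` and `LINK_ITEM` are illustrative names of our own, and the code only understands the subset of markdown used in the example above (one H1, one blockquote line, H2 sections made of `- [name](url): note` items).

```python
import re

# One list item: "- [Label](https://url)" with an optional ": note" suffix.
LINK_ITEM = re.compile(r"^- \[([^\]]+)\]\((https?://[^)\s]+)\)(?::\s*(.*))?$")


def parse_llms_txt(text):
    """Parse llms.txt text and collect structural problems."""
    title, summary, current = None, None, None
    sections = {}   # H2 name -> list of (label, url, note) tuples
    problems = []
    lines = [line.strip() for line in text.splitlines() if line.strip()]

    # The H1 is the only required element and must open the file.
    if lines and lines[0].startswith("# "):
        title = lines[0][2:].strip()
        lines = lines[1:]
    else:
        problems.append("missing H1 title on the first line")

    for line in lines:
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif current is None:
            # Before the first H2: blockquote summary or free-form text.
            if line.startswith("> ") and summary is None:
                summary = line[2:].strip()
        else:
            match = LINK_ITEM.match(line)
            if match:
                sections[current].append(match.groups())
            else:
                problems.append(f"unexpected line in section '{current}': {line}")

    if summary is None:
        problems.append("no blockquote summary (recommended)")
    problems += [f"section '{name}' has no links"
                 for name, links in sections.items() if not links]
    return {"title": title, "summary": summary,
            "sections": sections, "problems": problems}
```

Calling `parse_llms_txt(open("llms.txt", encoding="utf-8").read())["problems"]` on a local copy of the file returns an empty list when the basic structure is in place.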

How Does the Kalicube Metadata Extension Add Entity Signals?

Jason Barnard at Kalicube extended the base spec with an entity-metadata block. It is not part of the official specification. It is a technique layered on top of the official spec that adds four custom fields designed to strengthen entity disambiguation. In our testing, the Kalicube extension adds meaningful lift on AI answers about the brand, particularly for disambiguating brands with non-unique names.

The four Kalicube fields go above the blockquote, each on its own line:

one_line_description. A short phrase, ideally two to five words, though a longer category label is acceptable when the short form would be ambiguous. Think of it as the subtitle that would appear under your brand in a Google Knowledge Panel. For a B2B SaaS GEO agency like Red-engage: "Reddit and LLM optimization agency for B2B SaaS."

short_description. 20 to 50 words, starting with a subject-verb-object triple. Example: "Red-engage is a B2B digital marketing agency combining Reddit community marketing with generative engine optimization (GEO) to get B2B SaaS brands cited in AI answers."

long_description. 50 to 100 words with legal name, location, founding year, unique differentiator, client types, canonical name. This is the full disambiguation anchor.

keywords. Comma-separated list of all terms the AI should associate with this brand. Category terms, service terms, niche client terms, acronyms, name variants.

Example block for a B2B SaaS agency:

one_line_description: Reddit and LLM optimization agency for B2B SaaS

short_description: Red-engage is a B2B digital marketing agency combining Reddit community marketing with generative engine optimization (GEO) to get B2B SaaS brands cited in AI answers.

long_description: Red-engage (legal name bin2green LLC, dba Red-engage) is a B2B SaaS marketing agency founded in 2024, headquartered in Sheridan, Wyoming. The agency specializes in getting B2B SaaS brands cited by ChatGPT, Perplexity, Gemini, and Google AI Overviews through a combination of on-page GEO optimization and Reddit community amplification. Services include GEO Optimization, Reddit Marketing, and Reddit Ads.

keywords: Red-engage, Reddit marketing agency, GEO agency, generative engine optimization, B2B SaaS marketing, AEO, AI visibility agency, LLM optimization, ChatGPT visibility, AI search visibility

This block adds roughly 120 words to the file, which leaves it well under the 10KB guideline discussed in the FAQs.
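The word-count targets for the two longer fields are easy to check by script. The following is a minimal sketch under our own assumptions: the fields appear as single `name: value` lines exactly as in the example block, and `read_metadata` and `check_metadata` are hypothetical helper names, not part of any Kalicube tooling. It only checks that all four fields exist and that short_description and long_description fall inside the ranges given above.

```python
# Word-count targets taken from the field descriptions in this guide.
TARGETS = {"short_description": (20, 50), "long_description": (50, 100)}
FIELDS = ("one_line_description", "short_description",
          "long_description", "keywords")


def read_metadata(text):
    """Collect `field: value` lines for the four extension fields."""
    found = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() in FIELDS:
            found[key.strip()] = value.strip()
    return found


def check_metadata(text):
    """Return a list of missing fields and out-of-range word counts."""
    found = read_metadata(text)
    problems = [f"missing field: {name}" for name in FIELDS if name not in found]
    for name, (low, high) in TARGETS.items():
        if name in found:
            count = len(found[name].split())
            if not low <= count <= high:
                problems.append(
                    f"{name} has {count} words, target is {low} to {high}")
    return problems
```

Word counts here are whitespace-separated tokens, so "(GEO)" and "B2B" each count as one word.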

What Should a SaaS llms.txt Look Like?

Here is a complete template combining the official spec with the Kalicube extension, fully worked for a B2B SaaS brand. Adapt the brackets to your specific context.

# Your B2B SaaS Brand Name

one_line_description: [Category] for [ICP segment]

short_description: [Brand] is a [category] that helps [ICP] achieve [primary outcome] through [differentiator].

long_description: [Brand] (legal name [Legal Entity Name], dba [Brand]) is a [category] company founded in [year], headquartered in [location]. [Brand] serves [primary ICP] with [core capabilities]. Recent recognition includes [notable signals: funding, awards, press].

keywords: [Brand], [category term 1], [category term 2], [service term], [ICP term], [acronyms], [name variants]

> [Brand] is a [category] platform that helps [ICP] [primary outcome]. Founded [year], based in [location], trusted by [named clients or brand tiers].

[Brand] serves [ICP definition] through [primary offering]. The product is built for [specific use case] and differentiates through [unique capability].

## Product

- [Product Tour](https://yourdomain.com/product): Complete walkthrough of the platform

- [Pricing](https://yourdomain.com/pricing): Plans, features, and transparent pricing

- [Integrations](https://yourdomain.com/integrations): Supported integrations and API

- [Security](https://yourdomain.com/security): Security, compliance, and certifications

## Documentation

- [Getting Started](https://yourdomain.com/docs/getting-started): First-time setup and core concepts

- [API Reference](https://yourdomain.com/docs/api): Complete API documentation

- [Developer Hub](https://yourdomain.com/developers): SDKs, code examples, webhooks

- [Changelog](https://yourdomain.com/changelog): Release notes and feature history

## Resources

- [Blog](https://yourdomain.com/blog): Expert articles on [primary category]

- [Case Studies](https://yourdomain.com/case-studies): Client outcomes with metrics

- [Guides](https://yourdomain.com/guides): Long-form playbooks

- [Glossary](https://yourdomain.com/glossary): Category definitions

## Company

- [About](https://yourdomain.com/about): Team, mission, values

- [Careers](https://yourdomain.com/careers): Open roles

- [Press](https://yourdomain.com/press): Media coverage and logos

- [Contact](https://yourdomain.com/contact): Sales and support

## Optional

- [Legal](https://yourdomain.com/legal): Terms, privacy, compliance

- [Sitemap](https://yourdomain.com/sitemap.xml): Complete URL inventory

Roughly 40 minutes of real work to adapt. The quality of the result depends on the accuracy of the brand description and the selection of URLs. Put the thinking into the short and long descriptions. They are the payoff.
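If the brand facts already live somewhere structured (a CMS, a config file), the template can be rendered rather than hand-edited. This is a sketch of that idea, assuming the layout shown above. `render_llms_txt` and its parameters are illustrative names, and the values in the usage example are placeholders.

```python
def render_llms_txt(brand, metadata, summary, intro, sections):
    """Render llms.txt text in the layout used by the template above.

    metadata: dict of extension fields, emitted in insertion order
    sections: dict mapping an H2 name to a list of (label, url, note) tuples
    """
    lines = [f"# {brand}", ""]
    for key, value in metadata.items():
        lines += [f"{key}: {value}", ""]
    lines += [f"> {summary}", "", intro, ""]
    for name, links in sections.items():
        lines += [f"## {name}", ""]
        for label, url, note in links:
            lines.append(f"- [{label}]({url}): {note}")
        lines.append("")
    # Exactly one trailing newline at the end of the file.
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    print(render_llms_txt(
        brand="Acme",
        metadata={"one_line_description": "Billing platform for SaaS"},
        summary="Acme is a billing platform for SaaS finance teams.",
        intro="Acme serves finance teams through usage-based invoicing.",
        sections={"Product": [
            ("Pricing", "https://example.com/pricing", "Plans and features"),
        ]},
    ))
```

Generating the file from one source of truth also makes it harder for the descriptions in different fields to drift apart.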

Where Should You Place llms.txt on Your Site?

The spec places the file at the root of the domain: /llms.txt. In practice, serve it with an HTTP 200 response, a plain text MIME type (text/plain; charset=utf-8), and no authentication.

Specific implementation notes for common stacks.

Static sites (Next.js, Astro, 11ty). Place the file at the public root so it is served alongside robots.txt. For Next.js, this is /public/llms.txt.

Hosted CMS (Webflow, Framer, Ghost). Use the platform's custom file upload. Webflow allows plain text files through the hosting interface. Framer supports public file placement. Ghost requires a plugin or custom route.

WordPress. Install an "upload custom file to root" plugin, or add the file to wp-content/uploads and configure a rewrite rule to serve it at /llms.txt.

Custom stacks. Configure your web server to serve the file statically from your public directory. No middleware needed.

Verify by visiting yourdomain.com/llms.txt. The file should load in the browser as plain text. If you are redirected, get a 404, or see an HTML wrapper, the implementation needs adjustment.
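The same check can be scripted so it runs after every deploy. Below is a minimal sketch using only the Python standard library. The function names and the `llms-txt-check` user-agent string are our own inventions, and the rules simply mirror the failure cases listed above (redirect, non-200 status, wrong content type, HTML wrapper).

```python
import urllib.error
import urllib.request


def check_response(requested_url, final_url, status, content_type, body):
    """Return a list of problems with a fetched /llms.txt response."""
    problems = []
    if final_url != requested_url:
        problems.append(f"redirected to {final_url}")
    if status != 200:
        problems.append(f"status {status}, expected 200")
    if not content_type.lower().startswith("text/plain"):
        problems.append(f"content type is {content_type!r}, expected text/plain")
    head = body.lstrip()[:200].lower()
    if head.startswith("<!doctype") or "<html" in head:
        problems.append("body looks like an HTML page, not plain text")
    elif not body.lstrip().startswith("# "):
        problems.append("body does not start with an H1 line")
    return problems


def fetch_and_check(url):
    """Fetch the URL (following redirects) and run the checks above."""
    request = urllib.request.Request(url, headers={"User-Agent": "llms-txt-check"})
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            body = response.read().decode("utf-8", errors="replace")
            content_type = response.headers.get("Content-Type", "")
            return check_response(url, response.geturl(), response.status,
                                  content_type, body)
    except urllib.error.HTTPError as error:
        return [f"status {error.code}, expected 200"]
    except urllib.error.URLError as error:
        return [f"request failed: {error.reason}"]
```

Running `fetch_and_check("https://yourdomain.com/llms.txt")` returns an empty list when the file is served as described.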

How Do You Measure If Your llms.txt Is Working?

Measurement is necessarily indirect. No AI engine publishes citation data for llms.txt specifically. The proxies are measurable, though.

Entity accuracy tests. Run 10 queries through ChatGPT asking specific factual questions about your brand ("what does [Brand] do," "where is [Brand] headquartered," "who are [Brand]'s typical customers"). Before llms.txt deployment, log accuracy. Deploy. Wait 30 days. Re-run. Our internal testing shows 30 to 70% accuracy improvement on entity-level queries.
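When you log the before and after runs, decide up front how "improvement" is counted, because a change in percentage points and a relative change can differ widely on a 10-query sample. The sketch below reports both. The data in the usage example is made up for illustration and is not a result from our testing.

```python
def accuracy(results):
    """Share of answers marked correct; results is a list of booleans."""
    return sum(results) / len(results)


def compare(before, after):
    """Compare two runs of the same query set, before and after deployment."""
    base, new = accuracy(before), accuracy(after)
    return {
        "before": base,
        "after": new,
        # Absolute change, in percentage points.
        "point_change": round((new - base) * 100, 1),
        # Change relative to the starting accuracy, in percent.
        "relative_change": round((new - base) / base * 100, 1) if base else None,
    }


if __name__ == "__main__":
    # Hypothetical logs: 5 of 10 correct before, 8 of 10 correct after.
    before = [True] * 5 + [False] * 5
    after = [True] * 8 + [False] * 2
    print(compare(before, after))
```

In this hypothetical run, accuracy moves from 50% to 80%, which is a 30-point change and a 60% relative improvement. Report whichever one you choose consistently.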

Category inclusion. Run category-level queries ("best [category] for [ICP]"). If llms.txt is the primary lever, you should not expect immediate ranking lift in these. The benefit here is downstream, through better entity representation feeding retrieval.

Bing Webmaster Tools AI Performance. If Bing's crawler picks up llms.txt (which happens inconsistently), you may see changes in the grounding queries reported. Check the AI Performance dashboard monthly.

Direct AI system logs. Some platforms (Perplexity, custom LLM deployments) expose logs that show which files they fetched. If llms.txt shows up there, the AI system is using it.
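A complementary check, one you control yourself, is your own web server's access log: if an AI crawler requests /llms.txt, the request shows up there. The sketch below assumes the common "combined" log format used by default in many Apache and nginx setups. The `AI_AGENTS` list is an assumed, non-exhaustive set of user-agent tokens that should be verified against each vendor's current documentation.

```python
import re
from collections import Counter

# Assumed, non-exhaustive list of AI-related user-agent tokens.
AI_AGENTS = ("GPTBot", "ChatGPT-User", "OAI-SearchBot", "PerplexityBot", "ClaudeBot")

# Request, status, size, referrer, and user agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def llms_txt_fetches(log_lines):
    """Count requests for /llms.txt, grouped by matching AI agent token."""
    counts = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match or match.group("path").split("?")[0] != "/llms.txt":
            continue
        agent = match.group("agent")
        label = next((name for name in AI_AGENTS if name in agent), "other")
        counts[label] += 1
    return counts
```

For example, `llms_txt_fetches(open("access.log", encoding="utf-8", errors="replace"))` gives a per-agent tally. A user-agent string can be spoofed, so treat the counts as a signal rather than proof.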

What Are the Most Common llms.txt Mistakes?

Five mistakes we see repeatedly in B2B SaaS audits.

Mistake 1: Copying the spec example without adaptation. Teams pull the FastHTML example, change "FastHTML" to their brand name, and ship. The result is a file that does not reflect the brand's actual structure or priorities. Every description, URL list, and entity signal should be specific to your brand.

Mistake 2: Too many URLs in the main section. An llms.txt with 50 URLs in the main H2 sections is too noisy. AI systems cannot determine priority. Limit the main sections to 4 to 8 URLs each. Move the rest to the Optional section.

Mistake 3: Inconsistent brand description. The short_description says one thing. The long_description contradicts it. The blockquote says something else. Faced with conflicting descriptions, an AI system has no way to tell which is correct and may repeat the wrong one. Consistency across all three fields is critical.

Mistake 4: Out-of-date URLs. llms.txt references pages that have been deleted or restructured. 404s or redirects in llms.txt undermine trust. Audit the file every time you publish a structural change to the site.

Mistake 5: No updates after the first deploy. llms.txt is a living document. When you rebrand, add a new product line, or publish major new resources, update the file. A stale llms.txt is only marginally better than none.
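Mistakes 2 and 4 are the easiest to catch automatically. The following sketch counts the links in each H2 section and flags any URL that does not return 200. It is an illustration under stated assumptions: `fetch_status` is a hypothetical callable you supply (for example, a thin wrapper around your HTTP client that does not follow redirects, so a 301 is reported as a 301), and the limit of 8 comes from the guidance above.

```python
import re

# Captures the URL from a "- [Label](https://url)" list item.
LINK = re.compile(r"^- \[[^\]]+\]\((https?://[^)\s]+)\)")


def links_by_section(text):
    """Map each H2 section name to the URLs listed under it."""
    sections, current = {}, None
    for line in text.splitlines():
        line = line.strip()
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif current is not None:
            match = LINK.match(line)
            if match:
                sections[current].append(match.group(1))
    return sections


def audit(text, fetch_status, max_links=8):
    """Flag crowded sections and URLs that do not return HTTP 200.

    fetch_status is any callable mapping a URL to an HTTP status code.
    """
    problems = []
    for name, urls in links_by_section(text).items():
        # The Optional section is where overflow links are meant to go.
        if name != "Optional" and len(urls) > max_links:
            problems.append(
                f"section '{name}' lists {len(urls)} URLs, limit is {max_links}")
        for url in urls:
            status = fetch_status(url)
            if status != 200:
                problems.append(f"{url} returned {status}")
    return problems
```

Running this in CI whenever the site structure changes turns the audit into a routine check rather than something to remember.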

Key Takeaways

- llms.txt is a lightweight text file at the domain root that gives AI systems a structured entry point to your site. Proposed by Jeremy Howard in September 2024.

- The official spec requires only an H1. The recommended structure includes a blockquote description, H2 sections with titled URL lists, and an Optional section.

- The Kalicube extension adds four entity-metadata fields that strengthen brand disambiguation.

- For B2B SaaS, a complete llms.txt implementation takes about 40 minutes. In our internal entity-accuracy tests, it improved accuracy on entity-level queries by 30 to 70%.

- Skip the common mistakes (copying without adaptation, too many URLs, description inconsistency, stale URLs, no updates).

Frequently Asked Questions (FAQs)

Do AI engines actually read llms.txt?

Evidence is mixed. Jeremy Howard's spec documents adoption by FastHTML, Anthropic, and several developer-tool companies. Custom LLM deployments (Perplexity, some enterprise AI tools) explicitly fetch it. Major commercial engines (ChatGPT, Gemini) do not publicly document whether they consume llms.txt, but the indirect entity-signal benefit appears consistent in our testing.

What happens if my site already has robots.txt and sitemap.xml? Is llms.txt redundant?

No. The three files serve different purposes. robots.txt tells crawlers what to avoid. sitemap.xml tells search engines which URLs exist. llms.txt tells AI systems which URLs are most important and how to understand the brand entity. They coexist without conflict.

How big should llms.txt be?

The spec does not specify a maximum, but practical best practice is to keep the file under 10KB. If your implementation approaches that size, move more content to the Optional section or move to llms-full.txt for the expanded context.

Will AI engines fetch my llms.txt automatically?

Some do. Perplexity has been observed fetching llms.txt in its retrieval logs. ChatGPT's behavior is less documented publicly. For brands using custom LLM deployments (internal AI tools, enterprise chat systems), the file is often explicitly fetched and parsed.

Does having an llms.txt help with traditional SEO?

No direct SEO benefit. The file is ignored by Google's standard search crawler. The benefit is exclusively on the AI retrieval side.

How often should I update llms.txt?

At minimum, every time you publish a structural change to the site (new product launch, rebrand, major resource pages). A quarterly audit is a reasonable minimum cadence even without structural changes, since brand descriptions often drift over time.